Underwater Sonar Fusion for Enhanced 3D Reconstruction using Background Data and Voxel Initializer Mapping for AUV

ⓒ The Korean Sensors Society

This is an Open Access article distributed under the terms of the Creative Commons Attribution Non-Commercial License (https://creativecommons.org/licenses/by-nc/3.0/), which permits unrestricted non-commercial use, distribution, and reproduction in any medium, provided the original work is properly cited.

Abstract

This study proposes an underwater 3D object reconstruction method that fuses forward-looking sonar (FLS) and profiling sonar (PS) data through voxel-based space-carving. Underwater environments commonly rely on sonar sensors because of the limitations of optical equipment. However, the inherent dimensional ambiguity of conventional sonar systems prevents accurate object localization, necessitating sensor fusion approaches to achieve precise 3D reconstruction. The proposed methodology employs a complementary fusion strategy: FLS assigns probabilistic weights to all potential object regions, accounting for its vertical ambiguity, whereas PS complements this by executing conservative carving operations to remove regions where objects are confirmed absent. Unlike conventional region-of-interest-based reconstruction, this approach maps the background and objects together and then segments the objects. Validation using a ray-tracing simulation with sloped-box objects demonstrated that PS effectively carves away the ambiguous vertical regions initially predicted by FLS, resulting in significantly improved 3D reconstruction accuracy. The results confirm that the proposed sensor fusion approach resolves the dimensional ambiguity inherent to single-sensor methods, achieving precise object localization through systematic probabilistic space-carving in underwater environments.

Keywords:

Occupancy grid map, Object 3D reconstruction, Multi-sonar fusion

1. INTRODUCTION

Three-dimensional (3D) object reconstruction in underwater environments is crucial for marine exploration, underwater structure inspection, and seabed resource exploration. Consequently, extensive research has been conducted across these diverse fields [1-4]. However, the optical and electromagnetic sensors used in terrestrial applications cannot operate effectively underwater because of rapid signal attenuation and scattering in water. Therefore, acoustic sensors have become the primary choice for environmental sensing and navigation under challenging underwater conditions. Underwater surveying missions that employ autonomous underwater vehicles (AUVs) equipped with acoustic-based sonar sensors demonstrate superior performance in aquatic environments because sound waves propagate efficiently through water with significantly less attenuation compared to optical signals over considerable distances.

Among the various sonar types, forward-looking sonar (FLS) has emerged as the most promising for 3D reconstruction because of its high resolution and real-time imaging capabilities. However, FLS suffers from an inherent limitation: while it provides accurate horizontal information, it cannot determine the exact vertical position of the reflection points within its vertical field of view (FoV). This ambiguity causes a dimensional deficiency problem, which generates false slope artifacts in front of objects during reconstruction. To compensate for this limitation, researchers have developed various approaches that combine FLS with profiling sonar (PS) to supplement the missing dimensional information. Extensive research has been conducted in this multisensor fusion domain [5,6].

Conventional region-of-interest (ROI)-based approaches typically follow a sequential process: first, regions with a high probability of containing an object are filtered through ROI extraction. Subsequently, 3D coordinate transformation and reconstruction are applied to the filtered data from these detected regions [5,6]. However, this approach has critical limitations. Information can be acquired only when objects are in close proximity and are clearly distinguishable from background noise. In most underwater scenarios, the boundaries between objects and the background are ambiguous, so substantial amounts of potentially valuable data are excluded during filtering. Additionally, these methods cannot effectively use seafloor information for comprehensive mapping because background data are systematically discarded rather than integrated into the reconstruction process.

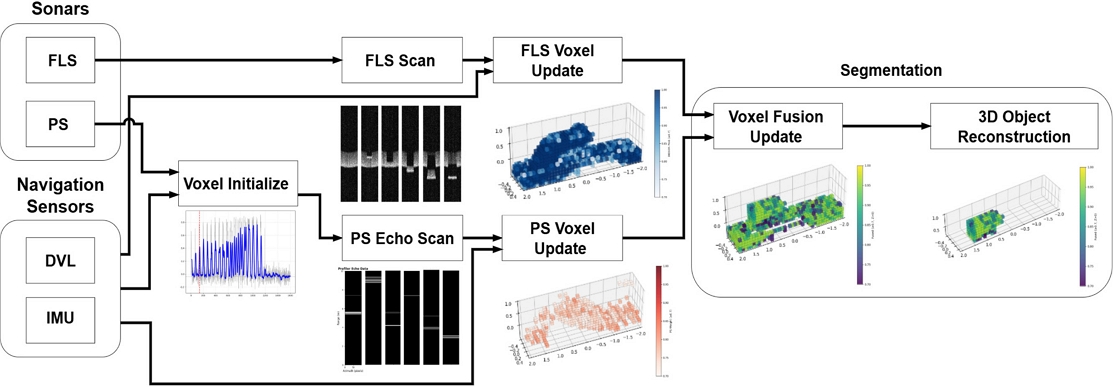

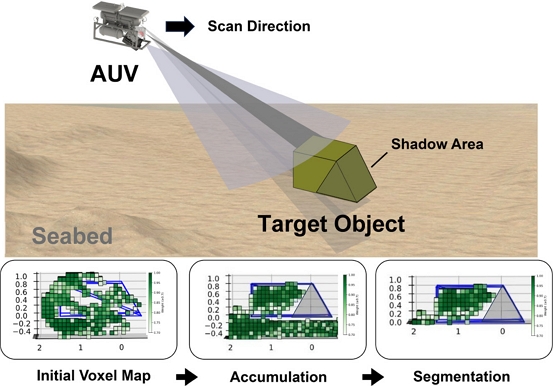

To overcome these limitations, this paper proposes a method that reverses the steps of traditional sequences, as illustrated in Fig. 1. Instead of requiring object detection before reconstruction, the proposed approach applies an immediate 3D coordinate transformation to all the sonar data. The method then constructs a comprehensive 3D scene representation through voxel-based probabilistic mapping, followed by object extraction from this complete representation. This systematic three-stage process (initial voxel mapping, accumulation, and segmentation) reconstructs background-included data and subsequently extracts objects through segmentation, which differs from conventional ROI-based methods that require prior object detection.

Fig. 1. Underwater 3D object reconstruction scenario and proposed method sequence using AUV-mounted sonar systems.

The key advantage of this approach is that it uses sonar data from all distances without object detection constraints. By using data from extended scanning ranges, the method naturally collects information from multiple viewpoints, providing more comprehensive coverage of the target objects and the surrounding environment. This comprehensive data collection mitigates the false slope generation problem of the FLS while overcoming the constraints of conventional fusion methods. This is the core contribution of this study, enabling robust 3D reconstruction performance for various object geometries under different approach conditions.

The remainder of this paper is organized as follows. Section 2 presents the methodology for converting FLS and PS sensor data into occupancy grid maps using the proposed fusion approach. Section 3 describes the simulation environment, experimental validation, and performance analyses. Section 4 presents the conclusions and directions for future research.

2. METHOD

This study employed an occupancy grid mapping methodology [7,8] to implement the proposed approach, which constructs local maps before object extraction for comprehensive 3D scene reconstruction. The proposed method reconstructs 3D objects by combining the advantages of the FLS and PS sensors. Unlike existing approaches that impose conditional constraints for object detection, the unconditional data processing strategy of the proposed method makes it universally applicable.

The core implementation follows a space-carving concept inspired by [9]: FLS data assign positive weights to all potentially occupied regions in the vertical direction to compensate for the inherent ambiguity, whereas PS data carve false positive regions by marking them as free space. This approach enables robust 3D mapping without the limitations of the traditional ROI-based methods.

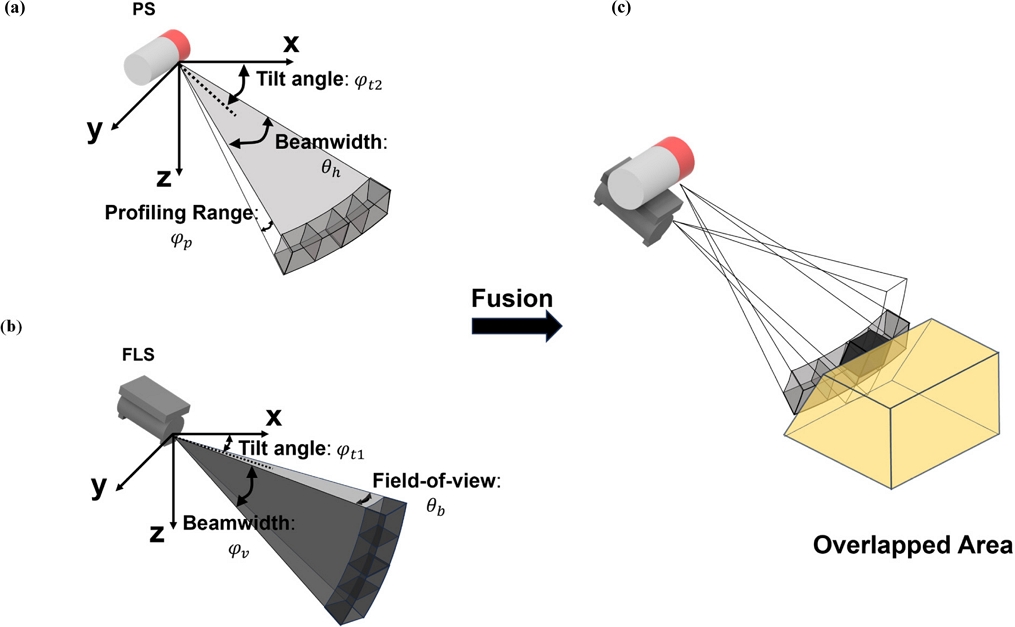

The core concept of the proposed method is illustrated in Fig. 2. As illustrated in Fig. 2 (b), FLS provides precise horizontal resolution along the FoV θb but suffers from vertical ambiguity within its beamwidth φv (dark gray cone area). This makes it impossible to determine the exact height of the object surfaces. Therefore, the candidate points must be generated at all possible positions in the vertical direction. In contrast, as shown in Fig. 2 (a), PS provides accurate vertical information through its profiling range φp but has horizontal ambiguity within its beamwidth θh due to its fan-shaped scanning pattern (light gray area). However, accurate vertical information from the PS can be used to remove (carve) regions where objects do not exist among the vertical candidate points generated by the FLS. Fig. 2 (c) demonstrates the fusion process, whereby the horizontal information (dark gray) of FLS and vertical constraints (light gray) of PS are combined to achieve a precise 3D reconstruction of the target object.

Fig. 2. Occupancy grid mapping method overview: (a) PS geometric parameters, (b) FLS geometric parameters, (c) FLS+PS weight assignment.

To implement this fusion concept, the proposed method follows a four-stage process as illustrated in Fig. 3: (i) object detection and voxel map initialization using PS data (Section 2.1); (ii) FLS-based voxel map updates for potential object regions (Section 2.2); (iii) PS-based carving operations to refine the occupancy map (Section 2.3); and (iv) object extraction from the final 3D scene representation (Section 2.4). This four-stage approach enables the complete realization of the “local mapping before object extraction” strategy proposed in this work. Each stage was designed to leverage the complementary strengths of both sensors while minimizing their individual limitations. The detailed implementation and roles of each stage are described in the following subsections.

2.1 Object Detection and Voxel Map Initialization

Creating a local voxel map across an entire environment is highly inefficient in terms of data storage and computational load. As observed in OctoMap [10], sparse voxel allocation significantly improves memory efficiency and computational performance by generating voxels only at required locations. Based on this principle, this study adopted an active object detection approach based on PS sensor data, generating local voxel maps only when objects are detected instead of maintaining complete maps throughout the scanning process.

The PS rotates about the vertical axis and records the sonar signal intensity in the direction the sensor faces. Although a full 360-degree rotation is generally possible, this study scanned only within a limited angular range to avoid the excessively long scan cycles of a full rotation.

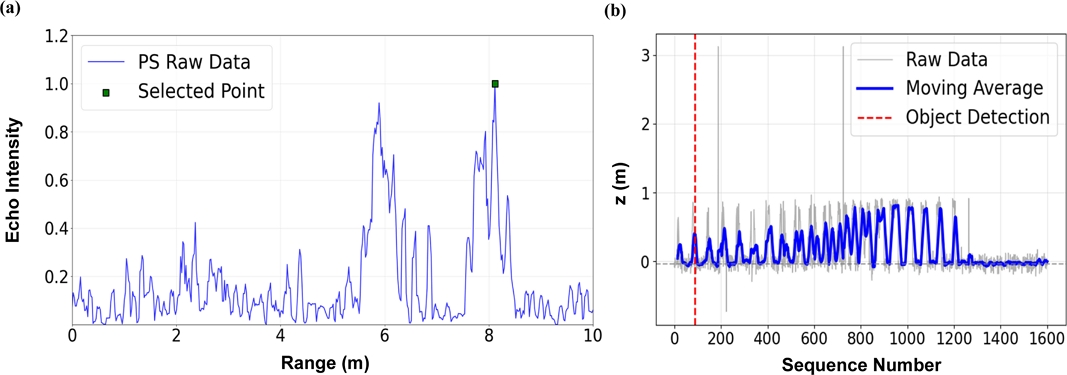

The object-detection algorithm using PS data is shown in Fig. 4. The data in Fig. 4 (a) come from a single PS sequence, with the echo intensity recorded at all points from 0 m to the maximum range. The highest-intensity point (marked with a green square) is taken as the selected point for that sequence. These selected points are then transformed from the local coordinate system of the sensor to global 3D coordinates by combining the position and orientation data of the AUV with the relative distance and angle measurements from the PS sensor. As shown in Fig. 4 (b), the system determines whether an object has been detected based on a repeated increase in the Z-coordinates of the selected points across sequences. When the PS detects an object, the system initializes a local voxel map centered at the location of the detected object. The voxel map covers a 10 × 10 × 10 m³ region divided into 100 × 100 × 100 voxels, giving a voxel size of 10 cm for precise 3D mapping.
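The detection and initialization steps described above can be sketched in Python as follows. This is a minimal illustration rather than the authors' implementation: the function names, the evenly spaced range bins, and the parameters `min_consecutive_rises` and `min_step_m` are assumptions introduced here, since the text does not state how many consecutive increases, or how large, trigger a detection.

```python
import numpy as np

def strongest_return(intensity, max_range_m):
    """Return (range_m, intensity) of the strongest echo in one PS sequence."""
    intensity = np.asarray(intensity, dtype=float)
    idx = int(np.argmax(intensity))
    # Assumption: bins are evenly spaced from 0 m to max_range_m.
    range_m = idx * max_range_m / (len(intensity) - 1)
    return range_m, float(intensity[idx])

def object_detected(z_values, min_consecutive_rises=3, min_step_m=0.05):
    """Flag an object when Z rises over several consecutive sequences.

    Both thresholds are hypothetical tuning parameters.
    """
    rises = 0
    for prev, curr in zip(z_values, z_values[1:]):
        rises = rises + 1 if (curr - prev) > min_step_m else 0
        if rises >= min_consecutive_rises:
            return True
    return False

def init_voxel_map(center_xyz, size_m=10.0, n_cells=100):
    """Create a log-odds grid (0 = unknown) centered on the detection."""
    log_odds = np.zeros((n_cells, n_cells, n_cells), dtype=np.float32)
    origin = np.asarray(center_xyz, dtype=float) - size_m / 2.0
    voxel_size = size_m / n_cells  # 10 m / 100 cells = 0.1 m
    return log_odds, origin, voxel_size
```

Storing log-odds rather than probabilities in the grid keeps later updates additive; a value of zero corresponds to an occupancy probability of 0.5.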

2.2 FLS Data-based Voxel Map Update

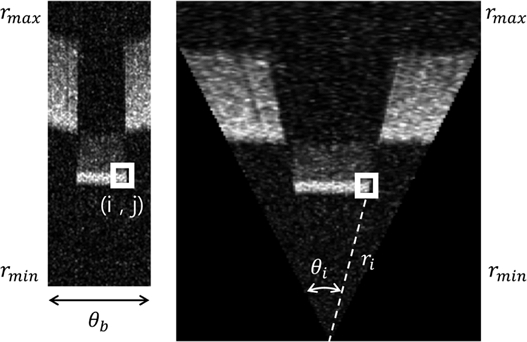

Once the voxel map is initialized through object detection, the system begins to update the voxel map using the FLS data. FLS sensors provide 2D image data, which are fundamentally acoustic data in polar coordinates converted into Cartesian coordinate images, as illustrated in Fig. 5. Here, the vertical pixel information corresponds to the distance from the sensor, and horizontal pixel information includes angular information corresponding to the individual beams.

This system does not convert all pixel data; it processes only the pixels that are likely to correspond to object detections. Specifically, only pixels with intensity values exceeding a threshold of 150 (in the 0–255 intensity range) are treated as detections, and only these selected pixel indices (i, j) are processed further. Selecting only high-intensity points allowed the objects and seabed to be reconstructed accurately.
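The pixel selection step can be illustrated with a short NumPy sketch. The function name is hypothetical, and treating i as the image row and j as the image column is an assumption; the threshold of 150 and the 0–255 range come from the text.

```python
import numpy as np

def select_fls_pixels(image, threshold=150):
    """Return indices and normalized intensities of pixels above the threshold."""
    image = np.asarray(image)
    # Assumption: i indexes rows (range) and j indexes columns (beams).
    i_idx, j_idx = np.nonzero(image > threshold)
    # Normalize 0-255 intensities to the 0-1 range for later weighting.
    intensity = image[i_idx, j_idx].astype(float) / 255.0
    return i_idx, j_idx, intensity
```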

However, the pixels lack precise vertical information because of the inherent ambiguity of FLS. In Fig. 2 (b), the dark-gray shaded area represents this uncertainty zone, where the exact vertical position cannot be determined from a single measurement. To address this, we first converted the selected pixel indices (i, j) from Fig. 5 to physical measurements (ri, θi) using Eqs. (1) and (2), and then applied the geometric parameters of tilt angle φt1, beamwidth φv, and FoV θb. The coordinate conversion formulae in Eqs. (3) and (4) were designed to address this vertical ambiguity.

| (1) |

| (2) |

| (3) |

| (4) |

Eqs. (1) and (2) convert image indices into physical measurements. Eq. (1) converts the range information from FLS image coordinates to actual distance measurements. Here rr is the distance from the sensor to the detected point. rmin and rmax are the minimum and maximum measurement distances of the sensor, respectively; h is the total number of vertical pixels; and ri is the range index of the image.

Eq. (2) converts the horizontal angular information from image coordinates into beam angles; θF represents the horizontal FoV; θi is the angular index of the image; and ω is the total number of beams (horizontal pixels).

These converted measurements are then used for the 3D coordinate transformation, as illustrated in Fig. 2 (b). The vertical ambiguity is addressed by generating sample points across the vertical FoV as in Eq. (3), where k represents the k-th possible vertical position; φmin and φmax define the vertical scan angle range; and Nv is the number of sampling points. This equation distributes possible object positions across the entire range of vertical ambiguity.

Eq. (4) transforms each 2D image coordinate (i, j) with multiple vertical k samples into the final 3D coordinates. The parameter k generates sample points distributed across the vertical ambiguity range, whereas φt1 represents the FLS mounting tilt angle, which is a fixed sensor installation parameter.
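The conversion chain described for Eqs. (1)–(4) can be sketched as follows. Because the equations themselves are not reproduced in this text, every mapping below is an assumption: the range and bearing are taken to vary linearly with the pixel indices, the vertical samples are taken to be evenly spaced between φmin and φmax, and the spherical-to-Cartesian convention (x forward, z up, positive elevation pointing downward) is chosen only for illustration. The output is in the sensor frame; the transformation to global coordinates using the AUV pose is omitted.

```python
import numpy as np

def fls_pixel_to_points(i, j, h, w, r_min, r_max, fov_h,
                        phi_min, phi_max, tilt, n_v):
    """Expand one FLS pixel into n_v candidate 3D points (sensor frame).

    All angles are in radians. The linear index mappings and the axis
    convention are assumptions of this sketch, not the paper's equations.
    """
    r = r_min + (r_max - r_min) * i / (h - 1)        # range of the pixel
    theta = fov_h * (j / (w - 1) - 0.5)              # bearing of the beam
    phi = tilt + np.linspace(phi_min, phi_max, n_v)  # candidate elevations
    x = r * np.cos(phi) * np.cos(theta)
    y = r * np.cos(phi) * np.sin(theta)
    z = -r * np.sin(phi)
    return np.stack([x, y, z], axis=1)               # shape (n_v, 3)
```

Every candidate lies at the same range and bearing, so the n_v points trace an arc through the vertical beamwidth. This arc is the set of positions that the PS carving step later prunes.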

Based on the reconstructed 3D points, the occupancy grid map is updated using the log-odds method. For each detected 3D point P = (x, y, z, intensity), we calculated the weight only for the voxel containing this 3D point.

| (5) |

| (6) |

| (7) |

| (8) |

| (9) |

In Eq. (5), used to calculate ΔLo, Po is an adjustable parameter that determines the strength of the occupied space updates. To assign a stronger weight to occupied regions, Po is set closer to 1.0, whereas values closer to 0.5 provide more conservative updates.

For the FLS data processing, we updated only the voxels containing the detected 3D points in occupied spaces. Eq. (6) is the update formula where ΔLo is the update weight; αF adjusts the influence between PS and FLS updates; and IF is the intensity value normalized to between 0 and 1.

The calculated L(x, y, z) values are limited to the upper and lower bounds expressed in Eq. (8) for numerical stability, where the clamp function restricts the values to a specified range, as in Eq. (7). In Eq. (8), this clamp function constrains the log-odds values to the range [-5.0, 5.0] to prevent numerical overflow and maintain computational stability during iterative updates.

Finally, the L(x, y, z) values are converted back to probabilities through a sigmoid function using Eq. (9), to calculate the final occupancy probability.
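The log-odds update cycle described for Eqs. (5)–(9) can be sketched as below. The log-odds increment, the clamp, and the sigmoid are the standard occupancy-grid forms; the way αF and IF scale the increment (a simple product) is an assumption, as are the function names and the default values of `p_occ` and `alpha_f`.

```python
import math

L_MIN, L_MAX = -5.0, 5.0  # clamp range stated in the text

def log_odds(p):
    """Standard log-odds of a probability p in (0, 1)."""
    return math.log(p / (1.0 - p))

def clamp(value, lo, hi):
    """Restrict value to the interval [lo, hi]."""
    return max(lo, min(hi, value))

def update_occupied(l_old, intensity, p_occ=0.7, alpha_f=1.0):
    """Assumed form: L_new = clamp(L_old + alpha_F * I_F * dL_o)."""
    delta_occ = log_odds(p_occ)  # positive for p_occ > 0.5
    return clamp(l_old + alpha_f * intensity * delta_occ, L_MIN, L_MAX)

def probability(l_value):
    """Sigmoid that maps log-odds back to an occupancy probability."""
    return 1.0 / (1.0 + math.exp(-l_value))
```

With the clamp at ±5.0, the recoverable probabilities are bounded to roughly 0.0067–0.9933, so a saturated voxel can still be reversed by a finite number of opposing updates.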

2.3 PS Data-based Voxel Map Update

As explained in Section 2.1, strong intensity signals in the PS sensor data are not necessarily definitive evidence that they originate from objects. Existing sonar data processing methods include using the maximum echo [11], selecting multiple peaks [12], and using all values above certain thresholds. While these approaches work well for general object detection, a more conservative approach is required for voxel map updates, considering the irreversible nature of the carving operation.

The critical difference lies in the purpose of data utilization. In Section 2.1, we focused on object detection, which tolerates false positives, whereas voxel carving permanently removes potential object regions. The most dangerous scenario occurs when an object is located nearby, but a distant seafloor produces stronger reflection signals. In intensity-based selection methods such as maximum echo, the system selects the distant seafloor point and incorrectly carves away the space with actual nearby objects.

Therefore, this study adopted a conservative closest distance-based selection strategy instead of conventional intensity-based methods. This approach ensures that actual objects are not carved away, prioritizing preservation over aggressive removal.

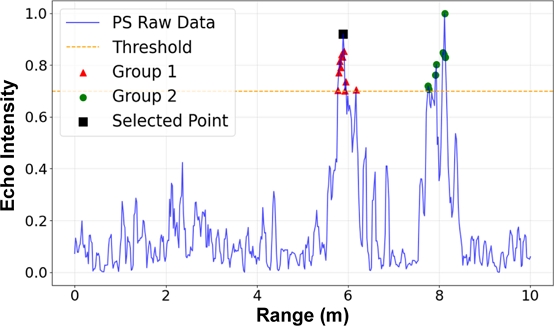

To implement closest distance-based selection on PS sensor data, we adopted a group-based selection method as illustrated in Fig. 6. The algorithm first applies a predefined threshold τ = 0.7 to select only high-reliability signals above this threshold (orange dashed line). These selected points are then sorted by distance and grouped, classifying consecutive points as belonging to the same group. Among all the formed groups, the group with the shortest distance measurements is selected. Within this closest group, the final detection point was determined by selecting the point with the highest signal intensity (marked with a black square). Through this process, a selected point was obtained from a single PS sequence.
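The group-based selection can be sketched as follows. The function name is hypothetical, and the sketch assumes that the intensity samples are already ordered by increasing range and normalized to the 0–1 range so that τ = 0.7 applies directly.

```python
import numpy as np

def closest_group_peak(intensity, ranges, tau=0.7):
    """Return (range, intensity) of the peak in the nearest group above tau.

    Returns None when no sample exceeds the threshold.
    """
    intensity = np.asarray(intensity, dtype=float)
    ranges = np.asarray(ranges, dtype=float)
    above = np.flatnonzero(intensity > tau)   # bins assumed sorted by range
    if above.size == 0:
        return None
    # A gap of more than one bin between indices starts a new group.
    breaks = np.flatnonzero(np.diff(above) > 1) + 1
    groups = np.split(above, breaks)
    nearest = groups[0]                       # first group = shortest range
    best = nearest[np.argmax(intensity[nearest])]
    return float(ranges[best]), float(intensity[best])
```

For a sequence with a moderate near echo and a stronger far echo, this returns the near peak, whereas a maximum-echo rule would return the far one. This is the behavior the conservative carving strategy relies on.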

The PS-based 3D coordinate conversion requires that the unique characteristics of the sensor be considered. As shown in Fig. 2 (a), the PS exhibits horizontal ambiguity (light gray fan-shaped area) while providing accurate vertical information, which is complementary to the FLS behavior. The geometric parameters including φt2, beamwidth θh, and profiling range φp define this sensing geometry. To handle the horizontal uncertainty, we distributed multiple candidate points across the ambiguous angular range at the selected distance rs:

| (10) |

| (11) |

Eq. (10) describes the horizontal angle sampling used to handle the inherent horizontal ambiguity of PS; θmin and θmax are the horizontal FoV angles of the PS sensor; Nh is the number of sampling points distributed across this angular range; and k is the sampling index.

Eq. (11) transforms the PS data into 3D coordinates. Here, rs is the chosen distance from the group-based selection method; φt2 is the PS sensor mounting tilt angle; φp is the PS profiling angle for the current measurement; and θh(k) represents the k-th horizontal angle sample. Parameter k generates multiple candidate positions across the horizontal uncertainty range.
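The PS conversion described for Eqs. (10) and (11) mirrors the FLS case with the roles of the two angles exchanged. The sketch below is written under assumptions, because the equations are not reproduced in this text: the horizontal samples are taken to be evenly spaced between θmin and θmax, the elevation is taken to be the sum of the mounting tilt and the profiling angle, and the axis convention (x forward, z up, positive elevation pointing downward) is illustrative only.

```python
import numpy as np

def ps_return_to_points(r_s, phi_p, tilt, theta_min, theta_max, n_h):
    """Expand one PS return into n_h candidate 3D points (sensor frame).

    All angles are in radians. The sampling and the axis convention are
    assumptions of this sketch, not the paper's equations.
    """
    theta = np.linspace(theta_min, theta_max, n_h)  # horizontal candidates
    phi = tilt + phi_p                              # single, known elevation
    x = r_s * np.cos(phi) * np.cos(theta)
    y = r_s * np.cos(phi) * np.sin(theta)
    z = np.full(n_h, -r_s * np.sin(phi))
    return np.stack([x, y, z], axis=1)              # shape (n_h, 3)
```

All candidates share the same height, which reflects the statement that the PS is accurate vertically and ambiguous only horizontally.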

To identify free-space voxels between the sensor and detected points, we applied the 3D Bresenham algorithm [13], which extends the traditional 2D ray-tracing method to three-dimensional space. The Bresenham algorithm efficiently determined all the voxels along the straight-line path from the sensor position to each detected PS point. Because the acoustic signal successfully traversed this region without obstruction, these intermediate voxels represent the confirmed free space.
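A compact integer-only version of the 3D Bresenham traversal is sketched below for illustration. It operates on voxel indices, so the sensor position and the detected point are assumed to have already been converted from metric coordinates to grid indices; the function name is hypothetical.

```python
def bresenham_3d(start, end):
    """Return the voxel indices on the line from start to end, inclusive."""
    p = list(start)
    d = [abs(e - s) for s, e in zip(start, end)]
    step = [1 if e > s else -1 for s, e in zip(start, end)]
    a = max(range(3), key=lambda k: d[k])        # driving (longest) axis
    b, c = [k for k in range(3) if k != a]       # the two minor axes
    err_b, err_c = 2 * d[b] - d[a], 2 * d[c] - d[a]
    voxels = [tuple(p)]
    while p[a] != end[a]:
        p[a] += step[a]
        if err_b >= 0:                           # time to step along axis b
            p[b] += step[b]
            err_b -= 2 * d[a]
        if err_c >= 0:                           # time to step along axis c
            p[c] += step[c]
            err_c -= 2 * d[a]
        err_b += 2 * d[b]
        err_c += 2 * d[c]
        voxels.append(tuple(p))
    return voxels
```

The traversal visits exactly one voxel per step along the longest axis, so a ray spanning n cells on that axis yields n + 1 voxels. Every voxel before the last one is a candidate for a free-space update.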

To each voxel identified by the Bresenham algorithm along the ray path, a weight update was applied as follows:

| (12) |

| (13) |

ΔLf is calculated according to Eq. (12), where Pf is an adjustable parameter that determines the strength of free space updates. To ensure conservative carving operations, Pf is typically set to less than 0.5, creating negative log-odds increments that mark voxels as free space.

Using Eq. (13), negative weight updates are applied, with Lnew(x, y, z) and Lold(x, y, z) representing the updated and previous voxel occupancy weights, respectively. ΔLf is a negative value; αP controls the carving intensity; and t represents the distance from the sensor to the point, normalized to the 0–1 range to implement distance-based weighting. The exponential function avoids strong negative weights near the detection point, where noise may be present, while generating stronger negative weights farther from the detection point, where we can be more confident that no objects exist.
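The carving update can be sketched as below. The exact form of Eq. (13) is not reproduced in this text, so the weighting used here, exp(−λt) with a hypothetical decay constant λ (`decay`), is only one function consistent with the description: it gives the strongest carving near the sensor (t = 0) and the weakest near the detection point (t → 1). The sparse dictionary grid, the function name, and the default parameter values are also assumptions.

```python
import math

def carve_ray(grid, ray_voxels, p_free=0.4, alpha_p=1.0, decay=3.0,
              l_min=-5.0, l_max=5.0):
    """Lower the log-odds of the voxels along one PS ray.

    grid is a dict mapping voxel index -> log-odds (missing = 0.0).
    ray_voxels is ordered from the sensor to the detected point.
    """
    delta_free = math.log(p_free / (1.0 - p_free))  # negative for p_free < 0.5
    n = len(ray_voxels)
    for k, voxel in enumerate(ray_voxels[:-1]):     # skip the detected voxel
        t = k / (n - 1)                             # 0 at sensor, near 1 at hit
        weight = math.exp(-decay * t)               # assumed exponential form
        l_new = grid.get(voxel, 0.0) + alpha_p * weight * delta_free
        grid[voxel] = max(l_min, min(l_max, l_new))
    return grid
```

Leaving the final voxel untouched keeps the carving conservative: the detected surface itself is never marked as free by the ray that observed it.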

Through this PS weight update process, ambiguous regions predicted by FLS as potential object locations were systematically carved away owing to the PS's accurate vertical information, to complete the sensor fusion approach.

2.4 Voxel Map Completion and Object Segmentation

After completing the iterative voxel map updates from the FLS and PS sensors, the system obtains a comprehensive 3D occupancy probability map of the target region. This final stage realizes the core concept of “local mapping before object extraction.” The process consists of two main operations: probability thresholding and object detection.

For probability thresholding, the system converts the probabilistic occupancy map into a binary classification. Voxels with occupancy probability values above 0.7 are classified as occupied space, whereas those below this threshold are considered free space. This threshold value was determined by fine-tuning the occupancy weight parameters to achieve optimal reconstruction performance. The resulting binary map provides a clear representation of the reconstructed 3D scene.
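The thresholding step amounts to a single comparison once the log-odds values are mapped back to probabilities. A minimal sketch, with a hypothetical function name:

```python
import numpy as np

def binarize(log_odds, p_threshold=0.7):
    """Return a boolean occupancy mask from a log-odds grid."""
    log_odds = np.asarray(log_odds, dtype=float)
    prob = 1.0 / (1.0 + np.exp(-log_odds))   # sigmoid: log-odds -> probability
    return prob > p_threshold
```

Because the sigmoid is monotonic, the same mask can be obtained by comparing the log-odds directly against log(0.7/0.3) ≈ 0.847, which avoids evaluating the exponential for every voxel.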

Object detection applies a moving average-based approach to the reconstructed voxel map, following the same principle as the object detection algorithm described in Section 2.1. The system computes a moving average of the Z-coordinates of the occupied voxels to establish a seafloor baseline that is robust to noise and small objects. When consecutive voxel heights deviate significantly from the moving-average baseline, the system identifies these regions as objects. This moving-average filtering suppresses noise and minor seafloor variations while maintaining sensitivity to genuine objects of interest.
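One way to realize this moving-average segmentation on a one-dimensional height profile is sketched below. The text does not give the window length, the deviation threshold, or the number of consecutive samples required, so `window`, `min_dev_m`, and `min_run` are hypothetical parameters. Updating the baseline only with samples accepted as seafloor is a design choice of this sketch that stops a long object from raising its own baseline.

```python
def detect_objects(heights, window=5, min_dev_m=0.3, min_run=3):
    """Flag samples that rise above a moving-average seafloor baseline.

    A sample is flagged only if it belongs to a run of at least min_run
    consecutive samples exceeding the baseline by more than min_dev_m.
    """
    heights = list(heights)
    flags = [False] * len(heights)
    recent = []   # last `window` heights accepted as seafloor
    run = []      # indices of the current run of deviating samples
    for k, h in enumerate(heights):
        baseline = sum(recent) / len(recent) if recent else h
        if h - baseline > min_dev_m:
            run.append(k)
            if len(run) >= min_run:
                for idx in run:
                    flags[idx] = True
        else:
            run = []
            recent = (recent + [h])[-window:]
    return flags
```

Requiring a minimum run length rejects isolated spikes, which corresponds to the noise suppression described above.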

Through this four-stage process, the proposed method successfully demonstrates the “background-included mapping followed by segmentation” approach. Compared to existing FLS+PS methods that rely on ROI-based object detection before reconstruction, the proposed approach is expected to achieve superior reconstruction performance. Although this approach is effective in the current simulation environment, real-world marine applications would require additional preprocessing stages to handle increased noise levels and filter out smaller objects that are not of interest for specific mission objectives. These improvements will be considered in future studies.

3. SIMULATION

3.1 Simulation Description

To validate the proposed method, we employed a ray tracing-based underwater acoustic simulator specifically designed for sonar simulations. The core operating principle of the simulator is based on ray tracing techniques [14], in which acoustic beams are represented as collections of rays. Object surfaces are modeled as triangular polygons, and the collisions between rays and polygons are detected. This simulator generates sonar data that closely resemble actual sonar images, thereby enabling a quantitative evaluation of algorithm performance. For FLS modeling, we used DIDSON specifications, whereas for PS modeling, 881A specifications were employed. The sensor specifications and experimental parameters used in our validation experiments are listed in Tables 1 and 2, respectively. Additional technical details of the two sensors are available in [15] and [16].

| (14) |

| (15) |

The mathematical implementation of the ray tracing information for the DIDSON and 881A specifications involves two key calculations: geometric intersection computation and acoustic intensity modeling. These key calculations enable accurate simulation of underwater acoustic propagation and reflection characteristics.
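For readers unfamiliar with the geometric part, a ray-triangle test of the kind such a simulator needs can be sketched as follows. The text does not state which intersection algorithm the simulator uses; the Möller–Trumbore algorithm shown here is a widely used choice and is substituted purely for illustration. Acoustic intensity modeling is not covered by this sketch.

```python
import numpy as np

def ray_triangle_distance(origin, direction, v0, v1, v2, eps=1e-9):
    """Moller-Trumbore test: distance along the ray to the triangle.

    Returns None when the ray misses the triangle or is parallel to it.
    """
    origin = np.asarray(origin, dtype=float)
    direction = np.asarray(direction, dtype=float)
    v0, v1, v2 = (np.asarray(v, dtype=float) for v in (v0, v1, v2))
    e1, e2 = v1 - v0, v2 - v0
    pvec = np.cross(direction, e2)
    det = np.dot(e1, pvec)
    if abs(det) < eps:                  # ray parallel to the triangle plane
        return None
    inv_det = 1.0 / det
    tvec = origin - v0
    u = np.dot(tvec, pvec) * inv_det    # first barycentric coordinate
    if u < 0.0 or u > 1.0:
        return None
    qvec = np.cross(tvec, e1)
    v = np.dot(direction, qvec) * inv_det  # second barycentric coordinate
    if v < 0.0 or u + v > 1.0:
        return None
    t = np.dot(e2, qvec) * inv_det      # distance along the ray
    return float(t) if t > eps else None
```

With a unit-length direction vector, the returned value is the range at which the echo from that ray would appear in the simulated sonar data.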

Additionally, to reproduce realistic sonar image characteristics, we applied speckle noise models that reflect the inherent acoustic properties of underwater environments [17,18]. The final intensity with added noise was calculated using a complex synthesis of coherent and incoherent echoes, as follows [18]:

| (16) |

Eq. (16) represents the final intensity calculation with speckle noise, where Ir is the measured intensity, which incorporates realistic noise characteristics. Through this comprehensive physical modeling approach, the simulator generated highly realistic sonar data that closely matched the actual underwater sonar characteristics, as shown in Fig. 7, which compares the real DIDSON data with our simulation results.

3.2 Simulation Setup

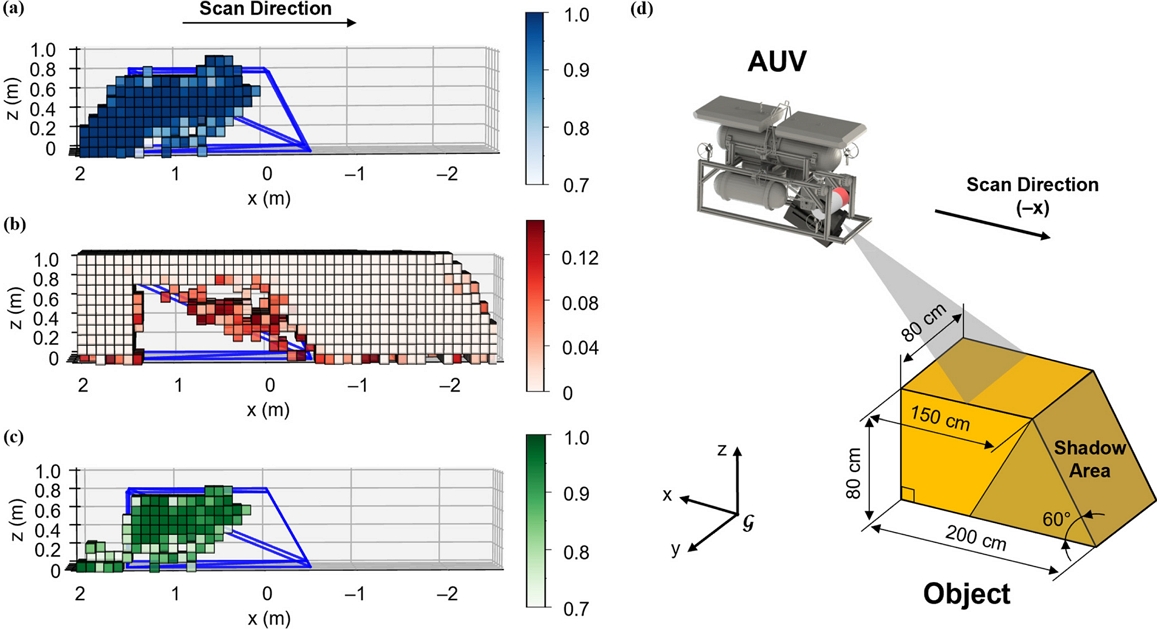

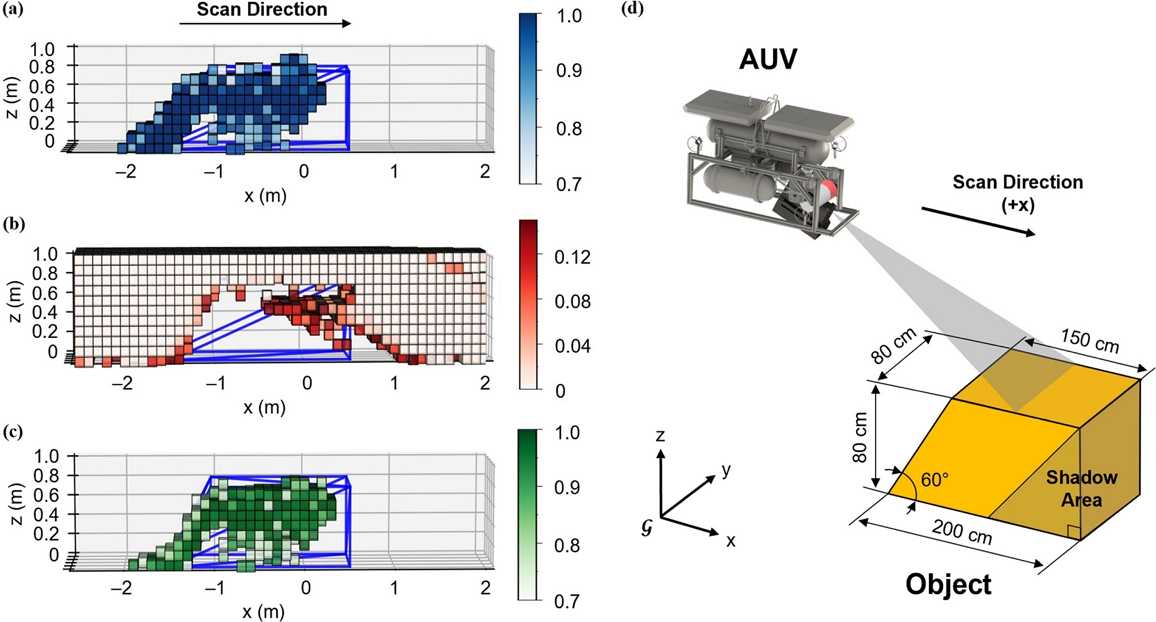

In the simulation environment, the target object was placed on a seafloor with slightly irregular surface characteristics. The test object was a sloped box with 90-degree and 60-degree angled surfaces, as shown in Figs. 8 (d) and 9 (d), respectively, specifically designed for validating false slope suppression and reconstruction accuracy. This object geometry represents a common challenge in underwater sonar reconstruction, where the FLS vertical ambiguity typically causes false slope artifacts that degrade reconstruction quality.

Fig. 8. Reconstruction results for 90-degree slope box: (a) FLS-based positive weights, (b) PS-based space-carving, (c) Fusion weight update, and (d) Scan direction and object geometry.

Fig. 9. Reconstruction results for 60-degree slope box: (a) FLS-based positive weights, (b) PS-based space-carving, (c) Fusion weight update, and (d) Scan direction and object geometry.

The AUV was configured with FLS and PS sensors positioned at the same location. Scanning operations were conducted by approaching the sloped box from two opposite directions (0° and 180°) at a constant altitude of 3.12 m above the seabed. This dual-angle scanning approach enables comprehensive data collection from opposite directions, providing the multi-perspective information required for robust 3D reconstruction.

The simulation executed 1600 sequences with a 5 mm advancement per sequence. FLS data were recorded every 4th sequence (400 frames per scan), whereas PS data were acquired in every sequence (1600 measurements per scan).
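The scan geometry implied by these numbers can be checked with a short calculation: 1600 sequences at 5 mm each correspond to a track of about 8 m per scan, and recording FLS data every 4th sequence gives 400 frames spaced about 20 mm apart. The variable names below are illustrative.

```python
N_SEQUENCES = 1600   # sequences per scan (from the text)
STEP_M = 0.005       # 5 mm advancement per sequence
FLS_EVERY = 4        # FLS recorded every 4th sequence

track_length_m = N_SEQUENCES * STEP_M   # along-track distance per scan
fls_frames = N_SEQUENCES // FLS_EVERY   # FLS frames per scan
fls_spacing_m = FLS_EVERY * STEP_M      # distance between FLS frames

print(track_length_m, fls_frames, fls_spacing_m)
```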

3.3 Results

To validate the proposed method, we compared the reconstruction results of the FLS-only approach and the proposed FLS+PS fusion method using a slope box modeling file as the ground truth reference.

The reconstruction results for a slope box at 90-degree orientation are presented in Fig. 8. The positive weights estimated by the FLS (DIDSON) in Fig. 8 (a) demonstrate the vertical ambiguity inherent in the FLS measurements, with potential object locations distributed across the entire vertical FoV. Fig. 8 (b) displays the carving process based on PS data, which removes uncertain regions through space-carving operations and eliminates the false-positive areas marked in red. Fig. 8 (c) presents the final fusion reconstruction result, which combines both sensor outputs and shows clear object boundaries and an accurate geometric representation. This result highlights the fundamental advantages of the occupancy-grid method. Instead of treating each voxel independently, our approach interprets the spatial relationship between voxels, using the entire sensor-to-object path to carve away the empty space. This allows a single measurement to inform the state of multiple voxels simultaneously, thereby maximizing information usage. Accumulating weighted evidence from multiple measurements makes the method robust against noise and false positives, which typically challenge point-based approaches.

The reconstruction results for a 60-degree slope orientation are displayed in Fig. 9. Although this configuration generates fewer false slope artifacts than the 90-degree case, inaccuracies still occur in the FLS-only reconstruction. The results demonstrate that the precise vertical information in PS allows the remaining false slope regions to be removed through accurate space-carving operations.

An important observation in both reconstruction results is the presence of shadow areas, indicated by the darker yellow regions in the object geometry diagrams (Figs. 8 (d) and 9 (d)). These shadow areas represent regions where objects physically exist but remain undetected during voxel reconstruction. This phenomenon occurs because of the directional nature of sonar propagation, in which acoustic beams cannot penetrate solid objects to gather information regarding the occluded areas behind them. This acoustic shadowing is an inherent limitation of sonar-based reconstruction systems and does not indicate failure of the proposed method. When these naturally occluded shadow regions are excluded from the evaluation, the reconstruction results accurately capture the visible object surfaces and boundaries.

Through a direct comparison between the ground truth and reconstructed voxel data, the results clearly demonstrate that the proposed FLS+PS fusion method removes a substantial portion of the uncertain regions compared with the FLS-only reconstruction. These results show that our method addresses critical limitations observed in prior work. Unlike previous approaches that generated severe false slope artifacts in 90-degree box reconstructions and struggled to accurately estimate angles for inclined objects such as 60-degree slopes, the proposed method eliminated these false slope problems and achieved an angle estimation accuracy close to the ground truth across different object orientations.

4. CONCLUSIONS

This study proposes an underwater sonar fusion method for enhanced 3D reconstruction via background data integration and local mapping using an AUV. To address the false slope limitations observed in existing approaches, we introduced a strategy that first reconstructs a comprehensive map of the scene and then segments the objects. Compared with conventional methods, this makes more of the available information usable, including background data, which reduces or eliminates false slope artifacts. The method uses FLS data to assign positive probabilistic weights across potential object regions, accounting for the vertical ambiguity, whereas PS data complement this by executing conservative carving operations that use accurate vertical information to remove regions where objects are confirmed to be absent. The proposed method was validated with a ray tracing-based simulator in which an AUV equipped with FLS and PS sensors scanned the target objects, and reconstruction was conducted using the proposed fusion approach. As demonstrated in the Results section, the method accurately carved away ambiguous regions from the FLS-only reconstruction, following the actual object outlines. Future research will focus on preparing our method for real-world use in two ways. First, the reliability of the method will be improved by testing its performance over a wider range of horizontal angles, similar to the partial scans that occur during real missions. Second, the proposed approach will be tested in a more realistic underwater setting. In these environments, we will address common problems such as unclear boundaries between objects and their backgrounds, as well as high levels of sensor noise. This includes developing methods to correct the weight errors caused by imperfect data. Ultimately, this work will enable us to perform experiments to reconstruct completely unknown objects in actual underwater environments.

Acknowledgments

This work was supported by the Korea Agency for Infrastructure Technology Advancement (KAIA), funded by the Ministry of Land, Infrastructure, and Transport through the Korea Floating Infrastructure Research Center, Seoul National University, under Grant RS-2023-00250727.

References

- N. Jaber, B. Wehbe, L. Christensen, F. Kirchner, MV3D: Multi-view 3D reconstruction of objects using forward-looking sonar, IEEE Robot. Autom. Lett. 10 (2025) 8762–8769. https://doi.org/10.1109/LRA.2025.3588062

- A. Cardaillac, M. Ludvigsen, Camera-sonar combination for improved underwater localization and mapping, IEEE Access 11 (2023) 123070–123079. https://doi.org/10.1109/ACCESS.2023.3329834

- S. Arnold, B. Wehbe, Spatial acoustic projection for 3D imaging sonar reconstruction, Proceedings of the IEEE Int. Conf. Robot. Autom. (ICRA), Philadelphia, USA, 2022, pp. 3054–3060. https://doi.org/10.1109/ICRA46639.2022.9812277

- M. Qadri, M. Kaess, I. Gkioulekas, Neural implicit surface reconstruction using imaging sonar, Proceedings of the IEEE Int. Conf. Robot. Autom. (ICRA), London, UK, 2023, pp. 1040–1047. https://doi.org/10.1109/ICRA48891.2023.10161206

- S. Rho, H. Joe, M. Sung, J. Kim, S. Kim, S.-C. Yu, Multi-sonar fusion-based precision underwater 3D reconstruction for optimal scan path planning of AUV, IEEE Access 13 (2025) 35157–35173. https://doi.org/10.1109/ACCESS.2025.3542084

- H. Joe, J. Kim, S.-C. Yu, Probabilistic 3D reconstruction using two sonar devices, Sensors 22 (2022) 2094. https://doi.org/10.3390/s22062094

- A. Elfes, Using occupancy grids for mobile robot perception and navigation, Computer 22 (1989) 46–57. https://doi.org/10.1109/2.30720

- H.P. Moravec, A. Elfes, High resolution maps from wide angle sonar, Proceedings of IEEE Int. Conf. Robot. Autom., St. Louis, USA, 1985, pp. 116–121. https://doi.org/10.1109/ROBOT.1985.1087316

- K.N. Kutulakos, S.M. Seitz, A theory of shape by space carving, Int. J. Comput. Vis. 38 (2000) 199–218. https://doi.org/10.1023/A:1008191222954

- A. Hornung, K.M. Wurm, M. Bennewitz, C. Stachniss, W. Burgard, OctoMap: An efficient probabilistic 3D mapping framework based on octrees, Auton. Robots 34 (2013) 189–206. https://doi.org/10.1007/s10514-012-9321-0

- B.R. Calder, L.A. Mayer, Automatic processing of high-rate, high-density multibeam echosounder data, Geochem. Geophys. Geosyst. 4 (2003) 1048. https://doi.org/10.1029/2002GC000486

- J.E. Hughes Clarke, L.A. Mayer, D.E. Wells, Shallow-Water Imaging Multibeam Sonars: A New Tool for Investigating Seafloor Processes in the Coastal Zone and on the Continental Shelf, Mar. Geophys. Res. 18 (1996) 607–629. https://doi.org/10.1007/BF00313877

- J.E. Bresenham, Algorithm for computer control of a digital plotter, IBM Syst. J. 4 (1965) 25–30. https://doi.org/10.1147/sj.41.0025

- J. Kim, M. Sung, S.-C. Yu, Development of simulator for autonomous underwater vehicles utilizing underwater acoustic and optical sensing emulators, Proceedings of the 18th Int. Conf. Control Autom. Syst. (ICCAS), PyeongChang, Korea, 2018, pp. 416–419.

- Sound Metrics Corp., DIDSON 300m Acoustic Lens. http://www.soundmetrics.com/products/didson-sonars/DIDSON-300m, 2024 (accessed 19 August 2025).

- Imagenex Technology Corp., 881A Imaging Sonar. https://imagenex.com/products/881a-imaging, 2024 (accessed 19 August 2025).

- Y. Huang, W. Li, F. Yuan, Speckle noise reduction in sonar image based on adaptive redundant dictionary, J. Mar. Sci. Eng. 8 (2020) 761. https://doi.org/10.3390/jmse8100761

- H. Joe, J. Kim, S.-C. Yu, 3D reconstruction using two sonar devices in a Monte-Carlo approach for AUV application, Int. J. Control Autom. Syst. 18 (2020) 587–596. https://doi.org/10.1007/s12555-019-0692-2