Advances in Healthcare IoT Devices for AI Driven Clinical Decision Supporting System for Emergency Medicine

ⓒ The Korean Sensors Society

This is an Open Access article distributed under the terms of the Creative Commons Attribution Non-Commercial License (https://creativecommons.org/licenses/by-nc/3.0/) which permits unrestricted non-commercial use, distribution, and reproduction in any medium, provided the original work is properly cited.

Abstract

Advances in artificial intelligence (AI), the Internet of Things (IoT), and its healthcare-focused subset, the Internet of Medical Things (IoMT), are gradually improving the quality of emergency medicine by accelerating diagnosis, improving patient tracking, and supporting real-time clinical decision-making. This review examines the technological progress made between 2020 and August 2025 in three main areas: telemedicine tools, wearable health-monitoring systems, and point-of-care diagnostic devices. It shows how emerging technologies, such as AI embedded in wearables, cloud-connected remote diagnostics, CRISPR-based detection systems, and mobile-enabled biosensors, have strengthened emergency response systems. The review also traces the evolution of deep learning models, from traditional architectures to transformer-based networks, and their potential use in clinical decision support systems. Combining these systems with edge computing, federated learning, and image-based diagnostics has changed the way data are processed and acted upon in critical care. Finally, this analysis considers how AI-IoT convergence is likely to shape emergency medicine, the challenges it faces in practice, and directions for future work.

Keywords:

Emergency medicine, Internet of Things (IoT), Internet of Medical Things (IoMT), Point-of-care (PoC) diagnostics, Clinical decision support systems (CDSS), Predictive analytics, Deep learning

1. INTRODUCTION

The growing number of emergency medical cases worldwide, together with the need for quick, data-driven decisions, has made it necessary to rethink how clinical work is performed with artificial intelligence (AI) and the Internet of Things (IoT). In healthcare, IoT includes a specialized subset, the Internet of Medical Things (IoMT), which extends IoT principles to medical devices and diagnostics. The combination of these technologies, especially in emergency medicine, has produced a new generation of smart diagnostic platforms that enable continuous monitoring, decentralized diagnostics, and predictive analytics at the point of care. Wearable health-monitoring systems, telemedicine tools, and point-of-care (PoC) diagnostic devices are the most important technologies in this group.

Wearable IoT systems have evolved well beyond conventional vital-sign monitors. They now incorporate built-in AI for early detection and cloud-based alerts, which makes them proactive tools for patient management. Telemedicine has become an important component of remote diagnostics: AI-assisted stethoscopes, mobile ultrasound tools, and virtual assistants are changing how people in low-resource areas receive expert assistance. At the same time, deep learning, CRISPR-Cas systems, and smartphone-based biosensors have made PoC diagnostics faster, more intelligent, and easier to use.

This review examines how these technologies evolved from 2020 to 2025 and how they converge in AI-driven clinical decision support systems. It also examines how deep learning, edge computing, and multimodal data fusion can change the way clinicians diagnose, make decisions, and prepare for emergencies. The goal is to provide a detailed account of how IoT-assisted AI platforms are changing the delivery of emergency healthcare worldwide.

2. HEALTHCARE IOT DEVICES FOR EMERGENCY MEDICINE

2.1 Wearable Health Monitoring Systems

In the fast-paced and resource-sensitive environment of emergency medicine, wearable health-monitoring systems integrated with IoMT technologies have emerged as effective and transformative solutions. They enable real-time, continuous physiological monitoring that can provide early warning of critical events such as sepsis, cardiac arrest, or respiratory failure. Over time, these technologies have progressed from simple vital-sign trackers to AI-integrated systems that help emergency physicians make proactive decisions. They typically take the form of smart garments, soft skin-mounted patches, or wrist-worn devices equipped with biosensors that monitor heart rate (HR), respiratory rate (RR), blood pressure (BP), oxygen saturation (SpO₂), temperature, and sometimes stress and muscle activity. A major difference between the newest devices and their predecessors is the integration of AI and machine learning (ML), which allows the system to flag abnormalities on its own and send alerts automatically, a capability that is particularly valuable for emergency diagnosis.

Early-generation systems focused on basic physiological parameters such as BP, SpO₂, temperature, HR, and RR. These devices, which included textile-based sensors, adhesive patches, and wrist-worn sensors, functioned as conventional vital-sign monitors without embedded intelligence. Nevertheless, clinical validation studies established a foundation for wider adoption. In one such study, a commercial wearable sensor that continuously measured temperature, HR, and RR was used to monitor 500 hospital patients. The device showed good agreement with nurse-recorded vital signs (HR r = 0.86), supporting its clinical accuracy. The study also found that a 10 min averaging window reduced data noise and maximized alert precision, which is crucial in emergencies, where signal clarity directly affects decision-making [1].
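A trailing moving average like the 10 min averaging window described above can be sketched in a few lines of Python, together with the Pearson correlation used to compare device readings with nurse-recorded values. This is a minimal illustration, not the method of [1]; it assumes one sample per minute, so a 10-sample window spans 10 min, and the function and variable names are hypothetical.

```python
from math import sqrt
from statistics import mean


def rolling_mean(samples, window):
    """Trailing moving average; early outputs average the samples seen so far."""
    smoothed = []
    running_total = 0.0
    for i, value in enumerate(samples):
        running_total += value
        if i >= window:
            # Drop the sample that has just left the window.
            running_total -= samples[i - window]
        smoothed.append(running_total / min(i + 1, window))
    return smoothed


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


# Hypothetical per-minute HR stream with one motion spike, smoothed over 10 samples.
hr_per_minute = [72, 75, 140, 74, 73, 76, 75, 74, 77, 76, 75, 78]
hr_smoothed = rolling_mean(hr_per_minute, window=10)
```

Smoothed values sampled at the times of manual observations could then be passed to `pearson_r` alongside the nurse-recorded values.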

Another deployment took place in a low-resource setting: emergency departments in Rwanda, where wearable biosensors were used to monitor patients with sepsis despite limited equipment and staff. Although there were problems such as loose sensors and connection dropouts, the system provided continuous, useful HR and RR data. This validation shows that wearable monitors can be used successfully in global health settings where traditional monitoring systems are often impractical [2].

As sensor accuracy and connectivity protocols improved, mid-generation wearables began to use machine learning algorithms to identify physiological abnormalities on their own. The "MyWear" smart garment combines deep neural networks (DNNs) with fabric-integrated sensors to continuously track changes in heart rate, muscle activity, and stress levels. When it detects an abnormality, the system sends emergency alerts to healthcare providers and designated caregivers through a cloud-based platform. Because data are uploaded to cloud servers, clinics can also use the device to monitor chronic conditions. Mobile-app access to the monitoring data encourages patients to use the platform and makes it easier for patients and healthcare providers to exchange feedback [3].
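The detect-then-alert flow described for MyWear can be illustrated with a simple rule-based check that packages out-of-range readings as a JSON payload for upload to a cloud service. This sketch deliberately swaps the DNN for fixed thresholds to keep it short; the ranges, field names, and payload format are hypothetical and are neither clinical guidance nor the MyWear implementation.

```python
import json
from dataclasses import asdict, dataclass

# Hypothetical normal ranges for illustration only (not clinical guidance).
NORMAL_RANGES = {"hr": (50, 120), "rr": (10, 24), "spo2": (92, 100)}


@dataclass
class Alert:
    patient_id: str
    timestamp: str
    parameter: str
    value: float
    low: float
    high: float


def check_vitals(patient_id, timestamp, vitals):
    """Return one Alert per monitored parameter that falls outside its range."""
    alerts = []
    for name, value in vitals.items():
        if name not in NORMAL_RANGES:
            continue  # parameter not monitored by this rule set
        low, high = NORMAL_RANGES[name]
        if not low <= value <= high:
            alerts.append(Alert(patient_id, timestamp, name, value, low, high))
    return alerts


def to_payload(alerts):
    """Serialize alerts as a JSON array, e.g. for an HTTPS or MQTT upload."""
    return json.dumps([asdict(a) for a in alerts])


alerts = check_vitals("patient-001", "2025-01-01T08:00:00Z", {"hr": 130, "rr": 16, "spo2": 89})
payload = to_payload(alerts)
```

A learned model would replace `check_vitals`, but the downstream step of turning a detection into a transmittable alert record stays the same.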

Wearables that run AI models directly on their microcontrollers represent a major step forward in health monitoring. The i-CardiAx chest patch, developed at ETH Zurich, uses ultra-low-power accelerometers to obtain HR, RR, and BP data and runs a quantized Temporal Convolutional Network (TCN) model, trained on a large clinical dataset, to detect sepsis early. Running on the device's microcontroller, the model can raise alerts up to 8.2 h before symptom onset in a clinical setting. The device runs on a 100 mAh battery, operates continuously for 432 h, and transmits data over Bluetooth in every measurement cycle [4].
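The two ingredients named above, a temporal convolutional network and quantization, can be sketched with the standard library: a causal dilated 1D convolution (the core TCN operation) and symmetric int8 quantization, in which the convolution runs on integers and the result is rescaled to real values. This is an illustrative single-channel sketch under those assumptions, not the i-CardiAx model; the device's layer sizes and quantization scheme are not described here.

```python
def quantize_int8(values):
    """Symmetric per-tensor int8 quantization: q = round(x / scale), |q| <= 127."""
    max_abs = max(abs(v) for v in values) or 1.0
    scale = max_abs / 127.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def causal_dilated_conv(x, weights, dilation):
    """y[t] = sum_k w[k] * x[t - k * dilation]; inputs before t = 0 are treated as zero.

    Each output depends only on current and past samples, which makes the
    convolution causal and suitable for streaming vital-sign data.
    """
    y = []
    for t in range(len(x)):
        acc = 0
        for k, w in enumerate(weights):
            idx = t - k * dilation
            if idx >= 0:
                acc += w * x[idx]
        y.append(acc)
    return y


def quantized_causal_conv(x, weights, dilation):
    """Run the convolution on int8 values, then rescale the integer accumulators."""
    qx, sx = quantize_int8(x)
    qw, sw = quantize_int8(weights)
    acc = causal_dilated_conv(qx, qw, dilation)  # integer-only multiply-accumulate
    return [a * sx * sw for a in acc]
```

Stacking such layers with dilations 1, 2, 4, and so on lets the receptive field grow exponentially with depth, which is how TCNs cover long vital-sign histories cheaply. As a side calculation from the figures above, a 100 mAh battery lasting 432 h implies an average current draw of about 100 / 432 ≈ 0.23 mA.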

SepAl is another wearable device with built-in AI, used in resource-limited settings such as field hospitals and rural clinics. It combines body-temperature sensors, photoplethysmography (PPG), and inertial measurement units (IMUs) to derive six vital signs. Using TinyML, an approach for running lightweight machine learning models on microcontrollers, it executes a temporal CNN entirely on the device and predicts sepsis with a median lead time of 9.8 h. Its compact hardware and low energy consumption make SepAl a useful tool for decentralized emergency care [5].
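A median lead time such as SepAl's 9.8 h is typically computed over patients whose alert preceded onset. The sketch below shows one way to do this, assuming times are recorded in hours since admission and only the first alarm per patient is kept; the data layout and exclusion rule are hypothetical and may differ from the study's protocol.

```python
from statistics import median


def lead_times_hours(first_alarm, onset):
    """Hours between the first alarm and sepsis onset, per patient.

    first_alarm: {patient_id: hours since admission of the first alarm}
    onset: {patient_id: hours since admission of sepsis onset}
    Patients without an alarm, or alarmed only after onset, are excluded.
    """
    leads = []
    for patient_id, onset_time in onset.items():
        alarm_time = first_alarm.get(patient_id)
        if alarm_time is not None and alarm_time <= onset_time:
            leads.append(onset_time - alarm_time)
    return leads


# Hypothetical cohort: patient "c" developed sepsis without any alarm.
leads = lead_times_hours({"a": 2.0, "b": 5.0}, {"a": 12.0, "b": 10.0, "c": 8.0})
median_lead = median(leads)
```

Note that `statistics.median` raises an error on an empty list, so a real analysis would need to handle cohorts with no true-positive alarms.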

The performance of wearable systems depends not only on their design specifications but, more importantly, on their system architecture. IoT-assisted wearable health systems require secure cloud infrastructure, dependable wireless protocols, and effective data-processing pipelines. According to a thorough analysis [6], performance is determined by the efficiency of data communication, storage, and visualization as well as by sensor fidelity. Important factors include Bluetooth Low Energy (BLE) for low-power transmission, edge computing for low-latency inference, and cloud-based dashboards for multi-patient trend analysis. Research has identified important gaps, most notably the absence of standardized frameworks for collecting, compressing, and interpreting vital signs across similar devices. Closing these gaps is essential for scalable platforms that can be used in healthcare environments ranging from mobile clinics to urban hospitals [6,7].
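Because the text notes the lack of standardized frameworks for compressing vital signs, the following sketches one simple lossless option: delta encoding, which stores the first sample and then only the small differences between consecutive samples, so each difference fits in one byte. The packet layout (a little-endian int16 first sample followed by int8 deltas) is a hypothetical format for illustration, not a BLE or medical-device standard.

```python
import struct


def delta_encode(samples):
    """First sample followed by differences between consecutive samples."""
    return [samples[0]] + [b - a for a, b in zip(samples, samples[1:])]


def delta_decode(deltas):
    """Rebuild the original samples with a running sum of the deltas."""
    samples, total = [], 0
    for d in deltas:
        total += d
        samples.append(total)
    return samples


def pack(samples):
    """Pack as an int16 first value plus int8 deltas (each delta must be in [-128, 127])."""
    if not samples:
        raise ValueError("need at least one sample")
    deltas = delta_encode(samples)
    if any(not -128 <= d <= 127 for d in deltas[1:]):
        raise ValueError("delta out of int8 range; send raw samples instead")
    return struct.pack(f"<h{len(deltas) - 1}b", *deltas)


def unpack(payload):
    """Inverse of pack: a 2-byte header followed by one byte per delta."""
    n_deltas = len(payload) - 2
    return delta_decode(struct.unpack(f"<h{n_deltas}b", payload))


hr = [72, 73, 73, 75, 74]  # hypothetical HR samples (bpm)
packet = pack(hr)
```

Here five HR samples occupy 6 bytes instead of the 10 needed for raw int16 values; the savings grow with longer windows of slowly changing signals.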

Real-time decision-making also relies heavily on the quality of the user interface and visualization. One such platform, RemoteHealthConnect, compiles wearable data into a web-based dashboard that tracks body temperature, SpO₂, RR, HR, and BP. Physicians can compare patient data, monitor trends, and receive automated alerts for abnormal values. The platform's user-centric design is supported by a System Usability Scale (SUS) score of 71.5, which is above the commonly cited benchmark average of 68 [8].
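For reference, the standard SUS scoring rule converts ten 1–5 Likert responses into a 0–100 score: odd-numbered (positively worded) items contribute the response minus 1, even-numbered (negatively worded) items contribute 5 minus the response, and the sum is multiplied by 2.5. A study-level figure such as 71.5 is usually the mean of per-respondent scores. The function name and example responses below are hypothetical.

```python
from statistics import mean


def sus_score(responses):
    """System Usability Scale score (0-100) for one respondent's ten answers."""
    if len(responses) != 10 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("SUS requires ten responses on a 1-5 scale")
    total = 0
    for item_number, r in enumerate(responses, start=1):
        # Odd items are positively worded, even items negatively worded.
        total += (r - 1) if item_number % 2 == 1 else (5 - r)
    return total * 2.5


neutral = [3] * 10       # all "neither agree nor disagree"
best = [5, 1] * 5        # strongest possible positive rating
study_mean = mean([sus_score(neutral), sus_score(best)])
```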

Despite their adoption in emergency and healthcare settings, wearable monitoring systems face many obstacles. Motion artifacts, sensor detachment, and skin-compatibility problems can compromise data integrity. Cybersecurity and data privacy remain major concerns, particularly as more devices send private data over public networks. The AI models incorporated into these systems must be validated across diverse demographic groups to ensure equitable performance. Clinical adoption has also been delayed by the absence of consistent standards for wearable technologies, which complicates regulatory approval and interoperability.

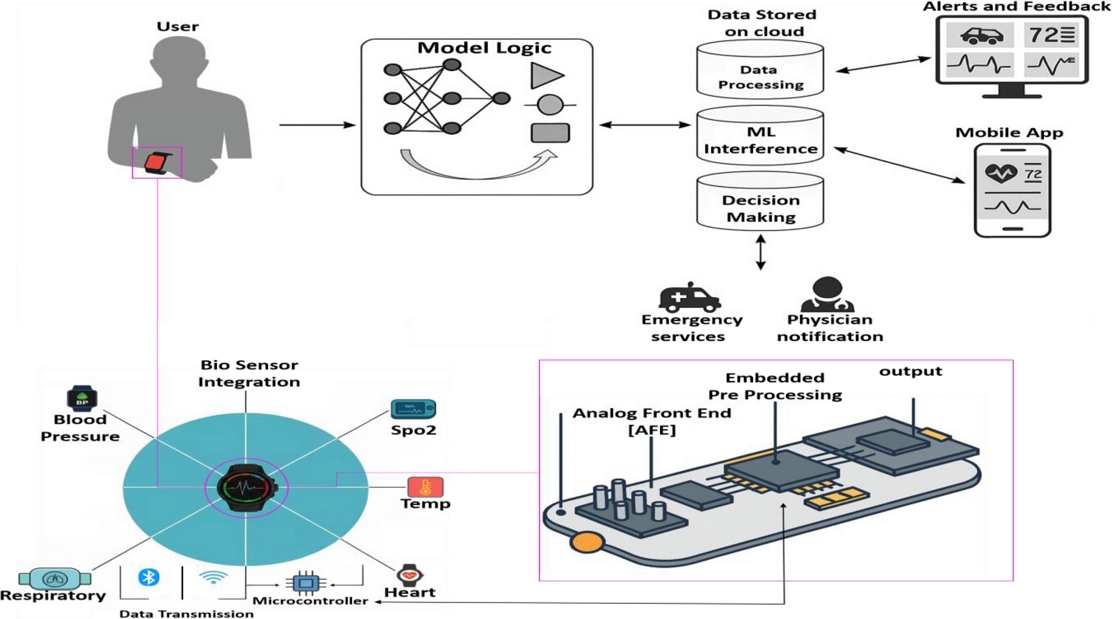

Nevertheless, current trends in wearable medical technology point to further innovation and integration. Developments in flexible electronics, nanomaterials, and battery miniaturization have opened new design options. At the same time, advances in federated learning and explainable AI are improving data privacy, security, and algorithmic transparency, all of which are essential for practical medical applications. As wearables evolve from post-discharge monitors into proactive emergency-triage assistants, they are expected to play an increasingly important role in medicine. With sustained interdisciplinary research and infrastructure investment, wearable IoMT technologies could become essential elements of emergency care worldwide. Fig. 1 schematically shows a wearable IoMT-based vital-sign monitoring system, illustrating how biosensors are integrated into a wrist-mounted device for real-time physiological tracking.

Fig. 1. A schematic depiction of a wearable IoMT-based vital-sign monitoring system featuring integrated biosensors for the continuous assessment of heart rate, respiratory rate, blood pressure, and oxygen saturation. This integration facilitates the early identification of critical events and enhances emergency response in both hospital and remote care environments.
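Federated learning, mentioned above as a way to improve privacy, keeps raw vital-sign data on each device or site and shares only model parameters. Its best-known aggregation rule, Federated Averaging (FedAvg), weights each client's parameters by the size of its local dataset. The sketch below shows only that aggregation step on flat parameter lists; local training, communication, and secure aggregation are omitted, and the names and numbers are hypothetical.

```python
def fed_avg(client_params, client_sizes):
    """Dataset-size-weighted average of client parameter vectors (FedAvg aggregation)."""
    if not client_params or len(client_params) != len(client_sizes):
        raise ValueError("need one size per client and at least one client")
    total = sum(client_sizes)
    n_params = len(client_params[0])
    return [
        sum(params[j] * size for params, size in zip(client_params, client_sizes)) / total
        for j in range(n_params)
    ]


# Two hypothetical hospitals with 100 and 300 local training records.
global_params = fed_avg([[1.0, 0.0], [3.0, 2.0]], [100, 300])
```

The site with more data pulls the global parameters toward its own, while neither site ever transmits patient records.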

2.2 Telemedicine Tools for Remote Diagnostics

Once viewed as an auxiliary service, telemedicine has been transformed in recent years and is now a fundamental component of diagnostic and decision-support frameworks in emergency medicine. This change has been driven by the need for faster response times, less strain on clinical infrastructure, and equitable access to care, particularly in resource-limited or remote settings. Situated at the intersection of portable biomedical devices, real-time data transmission, and artificial intelligence, telemedicine tools are now essential parts of clinical decision support systems (CDSS), providing scalable solutions for expert care outside hospital walls. With advances in wearable sensors, edge computing, and mobile diagnostics combined with AI algorithms, telemedicine is becoming a practical frontline tool for rapid assessment, risk stratification, and triage in acute care.

Even without on-site specialists, telemedicine platforms and portable diagnostic devices enable rapid decision-making in high-pressure clinical situations, such as stroke, trauma, cardiac arrest, or respiratory distress, where every minute counts. By extending centralized medical resources to distant patients and first responders, these systems reduce strain on emergency departments and shorten the time to treatment [9].

Modern telemedicine frequently combines advanced communication systems, AI-assisted diagnostics, and compact biomedical devices. Portable technologies such as mobile biosensor hubs, tablet-based platforms, AI-enabled stethoscopes, and handheld ultrasound devices now provide diagnostic capabilities that were previously limited to fully equipped hospitals. In underserved or disaster-affected areas, the growing integration of machine learning models into these devices facilitates early disease detection, risk stratification, and intelligent triage support.

Digital video consultation and simple communication systems were the mainstays of the first emergency telemedicine applications. Modern advances, however, have moved toward on-the-go diagnostics, in which devices not only transmit data but also interpret and act on it. This shift is exemplified by the growing incorporation of AI into portable stethoscopes and diagnostic probes that can now perform early screening for cardiovascular, respiratory, and obstetric conditions in both urban hospitals and rural clinics. AI-enabled stethoscopes for maternal cardiac diagnostics in low-resource environments mark a significant step in this direction. In one notable project, an AI-assisted digital stethoscope was used at a Nigerian medical facility to diagnose peripartum cardiomyopathy. By analyzing subtle auscultatory patterns that conventional techniques often miss, the embedded diagnostic model significantly increased the detection rate of heart failure. The tool offers high-sensitivity heart failure screening across multiple clinical sites and shows great promise for scalable deployment [10].

Subsequent research has extended AI-powered auscultation tools to a range of hospital environments, focusing on the identification of reduced left ventricular ejection fraction (LVEF), a key sign of heart failure. By incorporating deep learning algorithms into digital stethoscopes, clinicians matched the diagnostic accuracy of conventional echocardiographic evaluation while working much faster and with fewer resources. This development underscores the value of intelligent auscultation in frontline diagnostics, as it significantly improves triage accuracy and reduces unnecessary hospital transfers [11].

The push for widely deployable, affordable telemedicine has also produced low-cost Raspberry Pi-based stethoscopes. These compact diagnostic instruments, built with convolutional neural networks (CNNs) and bidirectional dynamic feature recognition, achieved a diagnostic accuracy of over 99% for 11 cardiopulmonary disorders. Their small size, low power consumption, and high precision make them suitable for emergencies, rural locations, and mobile clinics. Unlike bulky hospital-grade equipment, these smart stethoscopes can be used in community clinics or ambulances, substantially widening access to high-quality care [12].

In parallel with advances in auscultation, mobile remote presence systems have become increasingly important in telemedicine. One study investigated RP-Xpress, an early remote presence tool tested in Bolivia, which transmitted stethoscope and ultrasound data in real time over ordinary 3G networks. Healthcare providers thousands of kilometers away could work with local providers to perform PoC diagnostics for trauma simulations and prenatal assessments. Even without reliable electricity or specialized staff, this setup showed that, with appropriate design and communication infrastructure, expert-guided diagnostics can reach some of the world's most underserved populations [13].

To meet the growing need for rapid, high-resolution imaging in emergencies, handheld ultrasound platforms combined with 5G-enabled tablets have been developed to further improve real-time diagnostic capabilities. These systems allow emergency physicians to acquire diagnostic images and send them with low latency to remote specialists. By ensuring high-quality DICOM transmission and supporting video consultation, a 5G-based system helps overcome earlier limitations in image fidelity and transmission latency. These instruments are particularly important in disaster-affected areas and remote field hospitals, where prompt and precise imaging can determine the emergency care plan [14].

Intelligent virtual assistants that perform initial symptom assessment and triage through conversational interfaces are another significant advance in AI-driven telemedicine. In a clinical validation study, an AI-powered chatbot collected patient responses and suggested care pathways; when compared against real patient records, its recommendations showed a high degree of agreement with physicians' decisions. Such tools are especially valuable in rural areas with limited medical expertise and in high-volume settings where human triage staff may be overstretched. They make diagnostic guidance more accessible to patients while reducing the workload of clinical staff [15].
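Agreement between a virtual assistant's triage recommendation and the physician's decision is often summarized with Cohen's kappa, which corrects raw percentage agreement for the agreement expected by chance. The source does not state which statistic [15] used, so this is an illustrative sketch; the triage categories and labels are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same cases."""
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of each rater's marginal label frequencies.
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (observed - expected) / (1 - expected)


chatbot = ["ED", "ED", "GP", "self-care", "GP", "ED"]
physician = ["ED", "GP", "GP", "self-care", "GP", "ED"]
kappa = cohens_kappa(chatbot, physician)
```

In this toy example the raw agreement is 5/6 (about 0.83), but kappa is 17/23 (about 0.74) once chance agreement is removed.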

Interest in AI-integrated teleradiology systems has grown because of the worldwide shortage of radiologists and the increasing digitization of medical imaging. According to one review, deep learning could transform medical image interpretation, particularly in remote locations with little radiological expertise. These systems reduce diagnostic delays by using AI to prescreen and annotate images and to prioritize cases by urgency. The review also addresses important issues that must be resolved to realize the potential of AI-driven teleradiology, including data privacy, model explainability, and integration with hospital information systems [16].
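Prioritizing cases by urgency amounts to maintaining a worklist ordered by an AI-assigned priority. A minimal sketch using Python's `heapq` module is shown below; the urgency scale (1 = most urgent), the study identifiers, and the first-in-first-out tie-breaking are assumptions for illustration.

```python
import heapq
import itertools


class UrgencyWorklist:
    """Imaging studies ordered by AI-assigned urgency, first-in-first-out within a level."""

    def __init__(self):
        self._heap = []
        self._arrival = itertools.count()  # tie-breaker that preserves arrival order

    def add(self, study_id, urgency):
        heapq.heappush(self._heap, (urgency, next(self._arrival), study_id))

    def next_study(self):
        return heapq.heappop(self._heap)[2]

    def __len__(self):
        return len(self._heap)


worklist = UrgencyWorklist()
for study_id, urgency in [("cxr-001", 3), ("ct-head-002", 1), ("cxr-003", 3), ("ct-pe-004", 1)]:
    worklist.add(study_id, urgency)
reading_order = [worklist.next_study() for _ in range(len(worklist))]
```

The arrival counter keeps two equally urgent studies in the order they were received, so routine cases are not starved of a deterministic position in the queue.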

Finally, a systematic review assessed the state of remote diagnostics in telemedicine by building a structured taxonomy that links diagnostic technologies to specific clinical applications. It evaluated the accuracy and reliability of these systems, divided them into real-time and asynchronous categories, and identified the main barriers to implementation, including staff shortages, network infrastructure limitations, and data-management difficulties. Its recommendations for future research highlighted the importance of user-centered design, robust system architectures, and multi-stakeholder collaboration in building resilient telemedicine ecosystems [17].

By bridging the gap between specialized diagnostics and decentralized care delivery, telemedicine tools have become invaluable resources for emergency and remote care. AI-powered stethoscopes, handheld ultrasound systems, virtual assistants, and remote presence devices allow precise real-time assessment even without on-site specialists. Combining portable biomedical equipment with communication networks and intelligent algorithms not only speeds up diagnosis but also improves equitable access to high-quality care in underserved and disaster-affected areas. As telemedicine systems are further integrated with cloud infrastructure and machine learning, they will redefine clinician-patient interaction, emergency triage, and on-demand deployment of diagnostic resources.

2.3 Point-of-Care Diagnostic Devices

Point-of-care diagnostic devices are among the most prominent technologies reshaping medical care and emergency workflows. They deliver precise, rapid, and actionable results even in resource-limited settings. Building on the capabilities of wearable and telemedicine tools, advances from 2020 to 2025 have focused on small, intelligent, networked systems for infectious disease detection, biochemical analysis, and vital biomarker quantification. By giving frontline providers immediate diagnostic insight, these innovations enable real-time monitoring, targeted interventions, and timely triage without relying on centralized laboratories.

TIMESAVER, a point-of-care diagnostic system, uses a time-series deep learning approach to improve the performance of lateral flow assays (LFAs) [18]. By combining a CNN-LSTM for feature extraction, YOLO for object detection, and a fully connected layer for classification, the system produced accurate results within 1–2 min. This is a substantial improvement over conventional LFA rapid kits, which usually take 10–15 min and are often limited by subjective visual interpretation. TIMESAVER achieved more than 95% accuracy, sensitivity, and specificity for a range of targets, including hCG, COVID-19, influenza A/B, and troponin I. Its stability across test conditions and compatibility with five commercial LFA platforms highlight its potential for rapid diagnosis in emergency and resource-constrained settings.
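The accuracy, sensitivity, and specificity figures reported for TIMESAVER follow the standard confusion-matrix definitions. The sketch below computes them from binary labels (1 = positive, 0 = negative); the function name and example labels are hypothetical.

```python
def diagnostic_metrics(y_true, y_pred):
    """Accuracy, sensitivity (true-positive rate), and specificity (true-negative rate)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
    }


# Hypothetical reads: three true positives and two true negatives in the reference.
metrics = diagnostic_metrics([1, 1, 1, 0, 0], [1, 1, 0, 0, 1])
```

Sensitivity governs missed cases (false negatives), which is why it is usually reported separately from overall accuracy for emergency screening tests.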

Following a similar approach, a smartphone-based immunosensor platform was developed for the rapid detection of C-reactive protein (CRP), a key biomarker of inflammation and cardiovascular disease. The system combines a disposable microfluidic chip, an immunochromatographic strip, and a 3D-printed optical module aligned with the smartphone camera. A companion mobile application performed real-time image processing and CRP quantification, delivering results in 15 min. Validation trials showed a strong correlation with ELISA-based laboratory testing, confirming the platform's potential to bring laboratory-grade diagnostics into field settings. This compact, accessible device illustrates the growing viability of mobile technology in PoC diagnostics [19].
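Smartphone readers of this kind commonly convert the imaged test-line signal into a concentration through a calibration curve built from standards of known concentration. The sketch below fits a straight line by ordinary least squares and inverts it. The test-to-control intensity ratio, the linear response, and the calibration values are all assumptions (real immunoassay responses are often sigmoidal), and none of it is taken from [19].

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def estimate_concentration(test_intensity, control_intensity, slope, intercept):
    """Normalize the test line by the control line, then invert the calibration line."""
    ratio = test_intensity / control_intensity
    return (ratio - intercept) / slope


# Hypothetical calibration standards: CRP (mg/L) versus test/control intensity ratio.
standards_mg_per_l = [0.0, 5.0, 10.0, 20.0]
ratios = [0.10, 0.35, 0.60, 1.10]
slope, intercept = fit_line(standards_mg_per_l, ratios)
crp = estimate_concentration(85.0, 100.0, slope, intercept)
```

Normalizing by the control line compensates, at least partly, for differences in lighting and camera exposure between phones.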

PoC testing has transformed the detection and management of vaccine-preventable viral infections (VPVIs) by enabling rapid, decentralized diagnostics. Lateral flow assays, enzyme-linked immunosorbent assays (ELISA), microfluidic platforms, and molecular techniques such as nucleic acid amplification tests (NAATs) have significantly improved the sensitivity and speed of diagnosis at the point of care. More recently, CRISPR-based technologies and smartphone-integrated biosensors have enabled high-specificity detection of pathogens such as dengue virus, mpox virus, hepatitis B virus (HBV), and SARS-CoV-2. These devices support scalable deployment, real-time monitoring, and timely treatment decisions in resource-constrained environments. However, challenges such as platform standardization, environmental sensitivity, and budgetary limitations persist in low-income settings. Despite these challenges, PoC diagnostic technologies continue to evolve and offer a promising path toward stronger global public health preparedness and outbreak monitoring [20].

PoC diagnostics have also improved through further integration of biosensor technologies. Piezoelectric, optical, and electrochemical biosensors, which combine low infrastructure requirements with high sensitivity, have shown great potential for identifying viral biomarkers. Smartphone-enabled platforms such as Portronicx, which use a wireless potentiostat and an Android interface, have detected dengue virus antigens in less than 20 s, demonstrating the value of portable instruments in decentralized diagnostics. In addition, CRISPR-Cas systems such as Cas12 and Cas13 provide highly specific, programmable detection of viral nucleic acids, yielding rapid results in remote locations. Although issues remain, such as off-target effects and the need for nucleic acid pre-amplification, ongoing work aims to streamline workflows and increase precision. Collectively, these strategies underline the importance of PoC devices in addressing diagnostic inequities and enabling prompt clinical responses worldwide [21].

Another PoC platform, based on CRISPR-Cas12a-assisted detection, was introduced for SARS-CoV-2 diagnosis in clinical and community settings [22]. The system uses recombinase polymerase amplification (RPA) for isothermal nucleic acid amplification and an optimized single-guide RNA to direct Cas12a to the target. Activated Cas12a cleaves fluorescent reporter probes, allowing visual confirmation within 40 min. Clinical validation demonstrated 92.3% sensitivity and 100% specificity, with a detection limit of 10² copies/μL. Its simple workflow, high accuracy, and portability make the system well suited to decentralized testing, including home-based or drive-through diagnostics during pandemic outbreaks [22].

In parallel, researchers have proposed an AI-assisted colorimetric lateral flow immunoassay to increase the sensitivity of antigen detection [23]. The system uses standard nitrocellulose test strips but replaces human visual reading with machine learning-based image analysis that can detect minute colorimetric changes. The resulting lower detection limit substantially reduced the number of false negatives. Clinical trials confirmed agreement with laboratory-grade results, indicating that this AI-enhanced format has great potential in resource-limited environments. In mobile health deployments in particular, the AI layer offers a scalable way to standardize result interpretation [23].

Multiplexing has also gained considerable attention in recent years. A deep learning-assisted vertical flow assay (fxVFA) can simultaneously detect three important cardiac biomarkers: myoglobin, creatine kinase-MB (CK-MB), and heart-type fatty acid-binding protein (FABP) [24]. The paper-based platform is housed in a miniature 3D-printed cartridge and uses fluorescent conjugated polymer nanoparticles. The assay was completed in 15 min and required only 50 μL of serum. Fluorescence signals were captured with a smartphone camera and interpreted by a neural network trained on serum data. The platform showed less than 15% variability, sub-ng/mL sensitivity, and an R² value greater than 0.9. Its small form factor and analytical stability highlight its potential for real-time cardiac diagnostics in ambulances, remote clinics, and disaster areas [24].
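The variability and R² figures above correspond to two standard summaries: the coefficient of variation (CV) of replicate measurements, and the coefficient of determination between predicted and reference concentrations. The source does not state exactly how [24] computed them, so the functions below are generic definitions applied to hypothetical values.

```python
from statistics import mean, stdev


def coefficient_of_variation(replicates):
    """Sample standard deviation as a percentage of the mean."""
    return stdev(replicates) / mean(replicates) * 100


def r_squared(reference, predicted):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    ref_mean = mean(reference)
    ss_res = sum((r - p) ** 2 for r, p in zip(reference, predicted))
    ss_tot = sum((r - ref_mean) ** 2 for r in reference)
    return 1 - ss_res / ss_tot


cv = coefficient_of_variation([9.0, 10.0, 11.0])  # hypothetical replicates (ng/mL)
r2 = r_squared([1.0, 2.0, 3.0], [1.0, 2.0, 4.0])
```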

Finally, a comprehensive review of point-of-care biosensor technologies highlighted the role of optical, electrochemical, and microfluidic detection techniques in enabling rapid COVID-19 diagnosis. Examples include microfluidic electrochemical sensors, based on reduced graphene oxide and gold nanoparticles functionalized with cysteamine, that can detect viral antigens at picogram-per-milliliter levels. The review emphasizes the speed, portability, and mobile-device compatibility of these biosensors, and also identifies current difficulties in field deployment, regulatory approval, and large-scale manufacturing. These findings underline the importance of translating cutting-edge biosensor technologies into practical, scalable diagnostic instruments [25].

Together, these developments show a clear shift toward faster, more portable, and more intelligent testing platforms that can deliver clinical-grade accuracy outside conventional laboratories. By combining machine learning algorithms, innovative biosensing materials, and smartphone interfaces, next-generation PoC devices address key issues in emergency diagnostics, especially in resource-limited or high-demand settings. As these technologies mature, their smooth integration into broader healthcare systems will be crucial for prompt, decentralized, and equitable access to life-saving diagnostics.

3. AI MODELS FOR CLINICAL DECISION SUPPORT SYSTEMS

3.1 Deep Learning Models for Disease Predictive Analytics

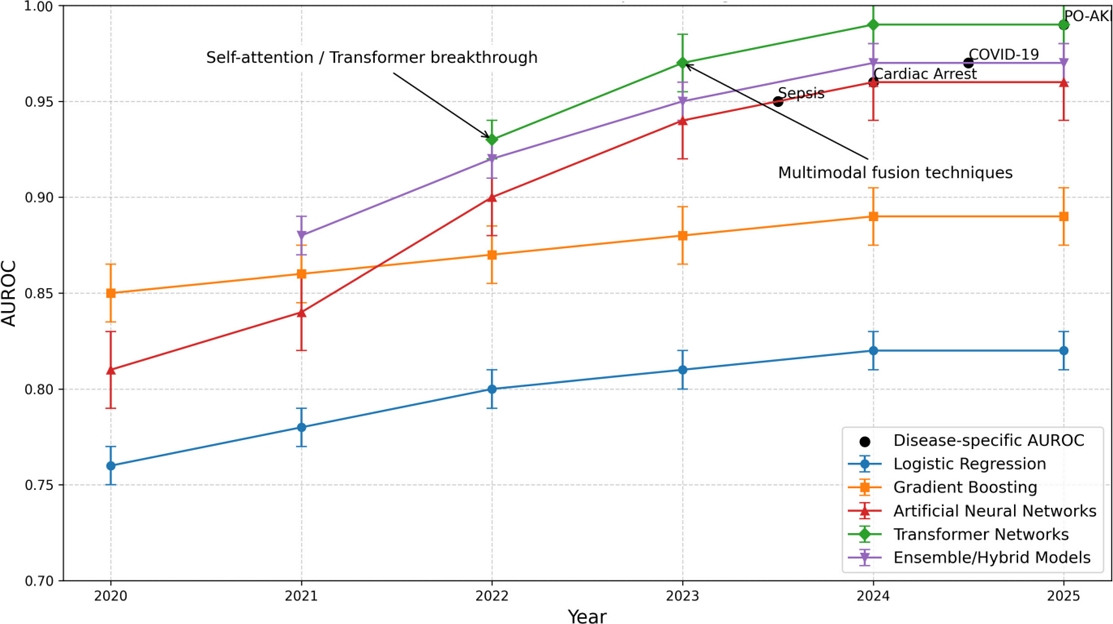

Over the past five years, deep learning (DL) models have transformed disease predictive analytics, particularly through the use of multimodal patient data and vital-sign assessments. In the early years, classic machine learning techniques such as logistic regression and gradient boosting used a broad array of clinical variables and achieved moderate predictive accuracy for both acute and chronic diseases [26,27]. Artificial neural networks (ANNs) and, in particular, recurrent neural networks (RNNs), which capture temporal dependencies in patient data, then had a significant impact, raising the area under the receiver operating characteristic curve (AUROC) to 0.88 for conditions such as cardiovascular disease and COVID-19 [28-30]. By 2023, improved attention mechanisms and transformer-based architectures enabled precise, real-time prediction of major outcomes such as sepsis, cardiac arrest, and intensive care unit admission, frequently from only three or four vital signs [31-33]. These models showed substantial gains in accuracy and clinical utility, including prediction windows extended to up to 30 h and AUROC values as high as 0.99 [31,32,34,35].
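AUROC, the metric used throughout this section, equals the probability that a randomly chosen positive case receives a higher risk score than a randomly chosen negative case (the Mann-Whitney formulation), with ties counted as one half. The quadratic-time sketch below makes that definition concrete on hypothetical scores; production code would normally use a library routine such as scikit-learn's `roc_auc_score`.

```python
def auroc(labels, scores):
    """Area under the ROC curve via pairwise comparison of positives and negatives."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a correct ordering
    return wins / (len(positives) * len(negatives))


example_auc = auroc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
```

An AUROC of 0.5 corresponds to random ranking, while values such as the 0.99 cited above mean that almost every positive case outranks almost every negative one.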

The increasing effectiveness and adaptability of DL models are notable findings of this review. In early disease diagnosis, including postoperative acute kidney injury (AKI) and hypertension-related mortality, ensemble and hybrid models, such as those that integrate preoperative and intraoperative data or combine LSTM networks with attention mechanisms, repeatedly outperform standard techniques [28,30,36]. Integrating multimodal data sources such as wearable sensors, genomics, imaging, and electronic health records (EHRs) has further improved predictive accuracy and generalizability [33,35,37-39]. For example, DL models trained on large and diverse datasets (such as 237,059 emergency department (ED) visits) outperformed logistic regression models, particularly when continuous, real-time data streams were added [31,34,40]. Furthermore, the persistent problem of model interpretability has been partly addressed through feature-importance and explainability approaches such as SHAP values, D-SHAP, and attention visualization, although there is still room for improvement [30,36,39].
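SHAP and attention visualization require model-specific tooling, but the underlying question, how much a model relies on each input, can be illustrated with permutation importance, a simpler model-agnostic technique swapped in here for brevity: shuffle one feature column and measure how much a score drops. This is not SHAP or D-SHAP, and the toy model, data, and function names are hypothetical.

```python
import random


def permutation_importance(predict, X, y, score, n_repeats=10, seed=0):
    """Mean drop in `score` when each feature column is shuffled independently.

    predict: function mapping one feature row (list) to a prediction
    score: function (y_true, y_pred) -> float, where higher is better
    """
    rng = random.Random(seed)
    baseline = score(y, [predict(row) for row in X])
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - score(y, [predict(row) for row in X_perm]))
        importances.append(sum(drops) / n_repeats)
    return importances


def neg_mse(y_true, y_pred):
    """Negative mean squared error, so that higher values are better."""
    return -sum((a - b) ** 2 for a, b in zip(y_true, y_pred)) / len(y_true)


# Toy model that uses only feature 0; feature 1 is ignored entirely.
X = [[1.0, 5.0], [2.0, 7.0], [3.0, 6.0], [4.0, 8.0]]
y = [1.0, 2.0, 3.0, 4.0]
importances = permutation_importance(lambda row: row[0], X, y, neg_mse)
```

The ignored feature receives an importance of zero, while shuffling the feature the model depends on degrades its score.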

The range of diseases covered by these models is broad. DL approaches have been successfully applied to chronic diseases (COPD, diabetes, and hepatocellular carcinoma), acute events (sepsis, cardiac arrest, and COVID-19), and even rare conditions, using both structured and unstructured data from large, diverse populations [29,33,39,40]. Disease-specific studies illustrate this versatility. For example, in postoperative AKI risk models, intraoperative vital-sign signals were the most significant predictors, whereas in patients with nonalcoholic fatty liver disease, time-varying covariates and transfer learning improved the prediction of hepatocellular carcinoma [30,38]. Furthermore, DL-based systems have been shown to reduce clinical workload by automating prediction tasks and integrating with hospital EHR systems, enabling timely intervention and personalized care [32,33,35].

Despite this progress, several challenges remain. External validation has revealed performance drops of up to 30% when models are applied to new populations, underscoring the need for more diverse and representative training datasets [26,34,35]. Although explainability has improved, the complexity of modern architectures such as transformers and ensembles may still hinder clinical trust and adoption [30,39]. Several studies have called for closer collaboration among data scientists, clinicians, and healthcare administrators to realize the full potential of DL in predictive disease analytics. Finally, integrating these models into real-world clinical workflows remains challenging [33,35,40].

Fig. 2 shows the year-by-year AUROC scores of several predictive models used in disease analytics between 2020 and 2025. Traditional models such as logistic regression and gradient boosting perform moderately and steadily, whereas artificial neural networks, transformer networks, and ensemble models improve substantially, reaching AUROC values as high as 0.99. The largest gains coincide with methodological innovations such as self-attention and multimodal fusion, underscoring the rapid evolution and superior accuracy of advanced deep learning techniques in recent years. Error bars indicate the variation in performance reported across studies.

Year-wise AUROC performance of predictive models from 2020 to 2025: traditional methods remain stable, whereas neural networks, transformer architectures, and hybrid ensembles show significant gains.

Table 1 shows that conventional models such as logistic regression and gradient boosting yield moderate AUROC values (0.76–0.89), have shorter prediction windows, and require more vital-sign inputs (usually 10 or more). In contrast, ensemble/hybrid models, transformer networks, and artificial neural networks achieve significantly higher AUROC values (up to 0.99), use fewer vital signs (as few as 3–5), and provide prediction windows of up to 30 h. Newer frameworks also employ more refined explainability techniques, indicating a shift toward better clinical interpretability alongside stronger predictive performance.
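The "prediction window" in Table 1 determines how training examples are labeled. A minimal sketch, assuming hourly-indexed records with three hypothetical vital signs and a single event time: each observation is labeled positive if the event occurs within the next `horizon_h` hours. The function and field names are illustrative; the cited studies may define windows, gaps, and censoring differently.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    hour: int        # time of prediction (hours since admission)
    features: list   # vital signs observed at that hour
    label: int       # 1 if the event occurs within the prediction window


def make_samples(vitals_by_hour, event_hour, horizon_h):
    """Label each observation 1 if the event (e.g. cardiac arrest) occurs
    within (hour, hour + horizon_h]. Observations at or after the event are
    dropped, since a prediction made then is no longer a prediction."""
    samples = []
    for hour in sorted(vitals_by_hour):
        if event_hour is not None and hour >= event_hour:
            break
        label = int(event_hour is not None and event_hour - hour <= horizon_h)
        samples.append(Sample(hour, vitals_by_hour[hour], label))
    return samples


# Hypothetical record: [heart rate, respiratory rate, SpO2] every 6 h
vitals = {h: [80 + h, 16 + h // 6, 98 - h // 10] for h in range(0, 48, 6)}
print([s.label for s in make_samples(vitals, event_hour=40, horizon_h=30)])
# → [0, 0, 1, 1, 1, 1, 1]
```

Lengthening `horizon_h` turns more observations into positives and gives clinicians earlier warning, but it also asks the model to detect weaker, earlier signals, which is why the 30 h windows reported for newer models are notable.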

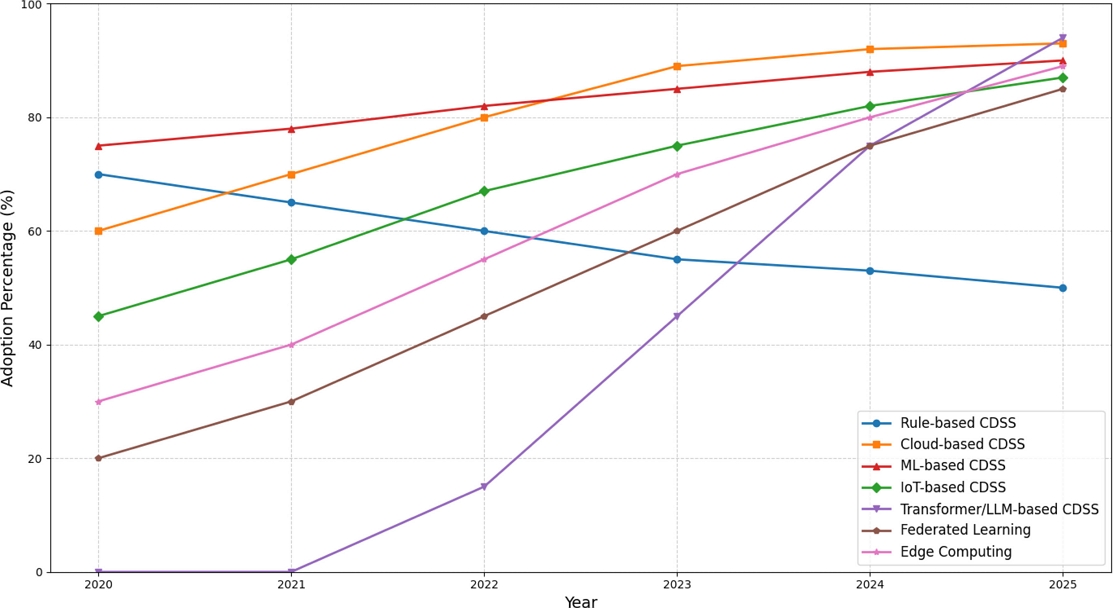

3.2 Real-Time Decision Support Systems

From 2020 to 2025, real-time clinical decision support systems shifted from traditional rule-based architectures to sophisticated AI-driven platforms that can process continuous data streams and provide prompt clinical advice [41,42]. As healthcare institutions moved toward more sophisticated solutions, adoption of traditional rule-based CDSS, which predominated early in this period, gradually decreased from 70% to 50% [43]. Adoption of cloud-based CDSS increased from 60% to 93%, driven largely by the COVID-19 pandemic. These platforms give healthcare organizations scalable, real-time decision support tools without requiring large investments in on-premise infrastructure [44].

During this period, machine learning-based systems developed steadily, progressing from simple supervised learning models with a reported effectiveness of 75% in 2020 to sophisticated ensemble approaches reaching 90% by 2025 [45-47]. At the same time, as clinical settings increasingly used continuous data streams from wearables, sensors, and monitoring equipment for real-time health assessment, adoption of IoT-integrated systems rose rapidly from 45% to 87% [48].
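One simple way ensemble CDSS combine member models is soft voting: average the members' predicted probabilities, optionally weighted, and threshold the result. The sketch below assumes three hypothetical single-signal logistic models with made-up coefficients; the ensembles in [45-47] use trained models and may combine them differently (e.g. stacking), so only the combination step is illustrated.

```python
import math


def logistic(z):
    return 1.0 / (1.0 + math.exp(-z))


def soft_vote(models, x, weights=None, threshold=0.5):
    """Weighted average of member probabilities; returns (probability, decision)."""
    probs = [m(x) for m in models]
    if weights is None:
        weights = [1.0] * len(models)
    p = sum(w * q for w, q in zip(weights, probs)) / sum(weights)
    return p, int(p >= threshold)


# Hypothetical member models over (heart rate, respiratory rate, SpO2);
# coefficients are illustrative only, not clinically derived.
models = [
    lambda v: logistic(0.05 * (v[0] - 100)),   # heart-rate-driven member
    lambda v: logistic(0.30 * (v[1] - 22)),    # respiratory-rate-driven member
    lambda v: logistic(0.40 * (92 - v[2])),    # desaturation-driven member
]

p, alert = soft_vote(models, [110, 24, 90])
print(f"ensemble risk={p:.2f}, alert={alert}")
```

Averaging probabilities tends to smooth out the errors of individual members, which is one reason ensembles often outperform their best single component.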

Integration with IoT platforms now allows clinical decision support systems to process multimodal patient data in real time. Studies have reported that IoT-enabled CDSS can detect diseases with 95.1% accuracy and support safer, faster preventive care [45,48].
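Real-time processing of sensor streams is often built around a sliding window. The sketch below keeps the last few heart-rate and SpO2 readings in fixed-length buffers and raises an alert when the rolling mean crosses a threshold. The class name, window size, and thresholds are hypothetical and not clinical guidance; an AI-driven CDSS would replace the threshold checks with a trained model, but the windowing pattern is the same.

```python
from collections import deque
from statistics import fmean


class VitalStreamMonitor:
    """Rolling-window monitor over streaming vital signs (hypothetical thresholds)."""

    def __init__(self, window=5, hr_high=120.0, spo2_low=90.0):
        self.hr = deque(maxlen=window)     # oldest reading is dropped automatically
        self.spo2 = deque(maxlen=window)
        self.hr_high = hr_high
        self.spo2_low = spo2_low

    def update(self, hr, spo2):
        """Ingest one reading and return the list of active alerts."""
        self.hr.append(hr)
        self.spo2.append(spo2)
        if len(self.hr) < self.hr.maxlen:
            return []  # not enough readings yet for a stable rolling mean
        alerts = []
        if fmean(self.hr) > self.hr_high:
            alerts.append("tachycardia")
        if fmean(self.spo2) < self.spo2_low:
            alerts.append("desaturation")
        return alerts


monitor = VitalStreamMonitor()
stream = [(100, 97), (110, 95), (125, 92), (130, 89), (135, 87), (140, 85)]
for hr, spo2 in stream:
    print(hr, spo2, monitor.update(hr, spo2))
# Only the last reading triggers: ['tachycardia', 'desaturation']
```

Smoothing over a window reduces false alarms from single noisy readings at the cost of a short detection delay, a trade-off that every streaming CDSS has to tune.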

The most significant technological shift in the history of clinical decision support occurred between 2022 and 2025, when transformer- and large language model (LLM)-based CDSS emerged, growing from 0% adoption to 94% by 2025 [49-52]. LLM-enhanced CDSS have been reported to achieve prediction accuracies of up to 89.7% for clinical outcomes while processing up to 42,000 clinical variables simultaneously. These systems have also demonstrated strong capabilities in processing clinical narratives, generating personalized treatment recommendations, and offering explainable clinical reasoning [53].

Federated learning, which enables collaborative model training across healthcare institutions without sharing raw patient data, grew from 20% adoption in 2021 to 85% by 2025. Studies have shown that federated learning-based CDSS can perform comparably to centralized systems while complying with HIPAA and GDPR requirements. These studies used LLaMA-3 models to provide real-time recommendations tailored to each patient's circumstances [54,55].
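To make the federated setup concrete, the sketch below uses Federated Averaging (FedAvg), a widely used aggregation rule chosen here for illustration; the systems cited in [54,55] may use other aggregation schemes and add protections such as secure aggregation or differential privacy, which are omitted. Each hypothetical hospital trains a small logistic-regression model on its own records, and only the resulting parameters are sent to the server, which averages them weighted by local sample count.

```python
import math


def local_update(weights, data, lr=0.1, epochs=20):
    """Local training at one site: gradient descent on logistic regression.
    The raw records in `data` never leave this function; only weights do."""
    w = list(weights)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for x, y in data:
            z = sum(wi * xi for wi, xi in zip(w, x))
            err = 1.0 / (1.0 + math.exp(-z)) - y   # prediction error
            for j, xj in enumerate(x):
                grad[j] += err * xj
        w = [wi - lr * g / len(data) for wi, g in zip(w, grad)]
    return w


def fed_avg(client_weights, client_sizes):
    """FedAvg aggregation: sample-size-weighted average of client parameters."""
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [sum(w[j] * n for w, n in zip(client_weights, client_sizes)) / total
            for j in range(dim)]


def federated_training(clients, dim, rounds=10):
    global_w = [0.0] * dim
    for _ in range(rounds):
        updates = [local_update(global_w, data) for data in clients]
        global_w = fed_avg(updates, [len(d) for d in clients])
    return global_w


# Hypothetical sites; features are [bias, standardized risk marker], label 1 = event
hospital_a = [([1, 2.0], 1), ([1, 1.5], 1), ([1, -1.0], 0), ([1, -2.0], 0)]
hospital_b = [([1, 1.0], 1), ([1, -1.5], 0), ([1, -0.5], 0)]
print(federated_training([hospital_a, hospital_b], dim=2))
```

Weighting by sample count keeps a small site from pulling the shared model as strongly as a large one, while the server still never sees any individual patient record.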

Adoption of edge computing increased from 30% to 89%, with edge-based AI systems achieving processing times of less than two minutes in real-time monitoring applications. This enables local, real-time inference and reduces latency for critical clinical decisions [56,57]. The convergence of these technologies is establishing a new paradigm in which edge-deployed, privacy-preserving, AI-driven clinical decision support systems are becoming the norm, significantly changing how medical professionals access and use real-time clinical intelligence, as shown in Fig. 3.

3.3 Image Recognition and Diagnostics

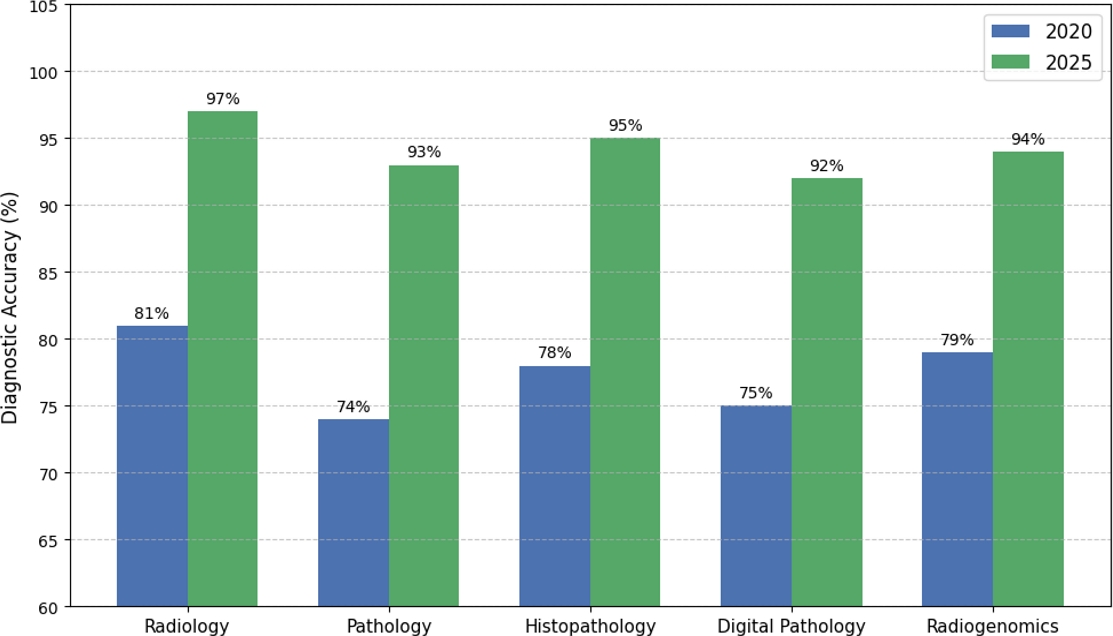

The incorporation of image recognition technologies into clinical decision support systems is a noteworthy development that builds on the predictive analytics and real-time monitoring capabilities described above. In recent years, the way medical imaging data are processed and used within CDSS frameworks has shifted from traditional rule-based diagnostic support to AI-driven platforms capable of real-time image analysis and clinical decision-making. Early studies showed that AI-CDSS can integrate computer vision techniques to improve radiological interpretation, with diagnostic accuracies ranging from 81% to 99.7% across a range of imaging modalities [58,59].

Advanced deep learning architectures, including CNNs and RNN-LSTM networks, have achieved 95.1% accuracy in disease detection while maintaining real-time processing suitable for clinical workflows [60,61]. Further performance gains followed the development of specialized frameworks for optimizing medical imaging CDSS. Radiomics, which extracts quantitative features from standard-of-care images to predict treatment response, disease progression, and molecular characteristics, has emerged as a particularly powerful technique for clinical decision support, with AUC values ranging from 0.79 to 0.99 [62]. By giving physicians evidence-based recommendations grounded in in-depth image analysis and patient-specific clinical data, these systems have helped bridge the gap between traditional medical image interpretation and modern AI-driven clinical decision-making.
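Radiomic pipelines start by turning the pixels inside a region of interest (ROI) into numbers. The sketch below computes four first-order features (mean, standard deviation, skewness, and histogram entropy) from a 2D image and a binary ROI mask, using plain nested lists. It is a simplified illustration: standardized toolkits such as PyRadiomics follow the IBSI feature definitions and offer many more options for discretization and for shape and texture features, and the bin count here is an arbitrary assumption.

```python
import math
from collections import Counter


def first_order_features(image, mask, n_bins=8):
    """Simplified first-order radiomic features from pixels where mask is nonzero."""
    roi = [px for row_img, row_mask in zip(image, mask)
           for px, m in zip(row_img, row_mask) if m]
    if not roi:
        raise ValueError("empty ROI")
    n = len(roi)
    mean = sum(roi) / n
    std = math.sqrt(sum((p - mean) ** 2 for p in roi) / n)
    skew = sum((p - mean) ** 3 for p in roi) / n / std ** 3 if std > 0 else 0.0
    # Discretize intensities into a fixed number of bins, then Shannon entropy (bits)
    lo, hi = min(roi), max(roi)
    width = (hi - lo) / n_bins or 1.0   # guard against a constant-intensity ROI
    bins = Counter(min(int((p - lo) / width), n_bins - 1) for p in roi)
    entropy = -sum((c / n) * math.log2(c / n) for c in bins.values())
    return {"mean": mean, "std": std, "skewness": skew, "entropy": entropy}


# Hypothetical 4x4 intensity patch with a lesion mask over the central 2x2 region
image = [[10, 12, 11, 10],
         [11, 80, 95, 12],
         [10, 85, 90, 11],
         [12, 10, 11, 10]]
mask = [[0, 0, 0, 0],
        [0, 1, 1, 0],
        [0, 1, 1, 0],
        [0, 0, 0, 0]]
print(first_order_features(image, mask))
```

Features like these become the inputs to a downstream classifier or survival model; the AUC values reported for radiomics refer to that downstream model, not to the features themselves.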

Between 2022 and 2025, advances in multimodal data processing allowed image-integrated CDSS to combine imaging analysis with comprehensive patient management systems. AI-driven CDSS have demonstrated strong diagnostic performance for complex conditions such as oral cancer: deep learning models have classified oral squamous cell carcinoma from histopathological images with 93.2% accuracy and combined clinical, imaging, and molecular data to provide individualized treatment recommendations [58].

Radiogenomic clinical decision support systems were a significant advance, integrating genomic profiles with imaging phenotypes to guide personalized cancer treatment decisions and predict clinically significant disease-free survival outcomes [63]. Digital pathology applications within CDSS frameworks have also had a significant clinical impact: AI systems can analyze whole-slide images for diagnostic support while remaining integrated with existing clinical workflows and electronic health record systems [64,65]. For specific applications such as breast cancer detection, ensemble deep learning techniques achieved an AUC of 0.97 and an accuracy of 98.12%, along with explainable decision-making processes that increased clinician trust and adoption [66].

Fig. 4 compares the diagnostic accuracies of radiology, pathology, histopathology, digital pathology, and radiogenomics. The marked increase in accuracy across all modalities demonstrates the impact of incorporating AI and deep learning into clinical diagnostic procedures. User-centered design principles for AI-CDSS deployment have been developed to integrate image recognition capabilities into clinical workflows without disrupting established diagnostic procedures, thereby overcoming major barriers to adoption [67]. The convergence of generative AI with clinical decision support systems has enabled new capabilities such as automated report generation, decision explanation mechanisms that improve clinical interpretability and diagnostic accuracy, and synthetic data augmentation for training [65,68]. As a result, image recognition has become an essential component of comprehensive CDSS platforms, which combine clinical decision support, temporal data analysis, and visual pattern recognition in integrated intelligent systems and are shifting diagnostic workflows from reactive image interpretation toward proactive, AI-enhanced clinical decision-making.

The comparison of diagnostic accuracy among radiology, pathology, histopathology, digital pathology, and radiogenomics post-AI integration reveals significant performance improvements.

Recent progress in artificial intelligence has reshaped disease prediction, diagnosis, and decision support, leading to considerable improvements in healthcare systems. Deep learning models that analyze clinical data and vital signs in real time have advanced from conventional techniques to transformer networks and ensembles, substantially increasing accuracy and aiding the early identification of serious medical conditions. At the same time, AI-powered cloud-based platforms have replaced rule-based real-time clinical decision support systems. These platforms can integrate massive multimodal health-data streams and provide physicians with rapid, actionable insights.

These systems have also been augmented with image recognition technologies that enable automated, highly precise interpretation of medical images. Together, these advances are shifting healthcare from fragmented, reactive approaches toward a proactive, integrated, and intelligent care ecosystem in which prediction, diagnosis, and intervention are readily available and interconnected at the point of care.

4. DISCUSSION

Although AI-integrated healthcare IoT devices and CDSS hold great potential for emergency medicine, several limitations must be noted. First, data security and privacy remain major concerns. As wearable sensors, telemedicine tools, and point-of-care devices increasingly rely on cloud platforms and wireless transmission, sensitive patient data may be exposed to cyberattacks or unauthorized access. Despite the introduction of techniques such as federated learning and encryption protocols, ensuring compliance with regulations such as HIPAA and GDPR across diverse healthcare contexts remains a significant challenge [69].

Second, large-scale deployment is hampered by a lack of standardization and interoperability across devices and platforms. Wearable biosensors, telemedicine systems, and AI-based PoC devices frequently use proprietary formats for data collection and transmission. This fragmentation slows regulatory approval and makes seamless data sharing between institutions difficult. To ensure reproducibility and scalability in clinical practice, standardized benchmarks for device validation and unified frameworks for data collection are urgently needed [70].

Third, adoption is hampered by limited model explainability and clinician trust. Many of the most accurate predictive models, such as transformer-based architectures and hybrid ensembles, are also the most complex, which limits their transparency in clinical settings. Clinicians may hesitate to rely on AI recommendations for important decisions if the output is not interpretable. Recent developments in explainable AI, such as attention visualization, feature attribution, and hybrid symbolic–statistical approaches, offer promising avenues for increasing trustworthiness, although more work is needed to incorporate them into real-time CDSS [68].
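Attention visualization, one of the explainability approaches mentioned above, inspects the weights a model assigns to each input position. The sketch below computes scaled dot-product attention weights of a single query over a sequence of per-hour key vectors and reports which hour receives the most weight. The embeddings, query, and values are hypothetical toy numbers rather than outputs of a trained model, and attention weights are a heuristic signal that does not always reflect causal feature importance.

```python
import math


def softmax(xs):
    m = max(xs)                          # subtract max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]


def attention_weights(query, keys):
    """Scaled dot-product attention weights of one query over a sequence of keys."""
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    return softmax(scores)


def attention_pool(query, keys, values):
    """Attention-weighted sum of values; returns (context vector, weights)."""
    w = attention_weights(query, keys)
    dim = len(values[0])
    context = [sum(wi * v[j] for wi, v in zip(w, values)) for j in range(dim)]
    return context, w


# Hypothetical per-hour embeddings of a patient's vital-sign history
keys = [[0.1, 0.0], [0.2, 0.1], [2.0, 1.5], [0.3, 0.2]]
values = [[82, 16], [85, 17], [118, 26], [90, 18]]   # raw [HR, RR] per hour
query = [1.0, 1.0]                                    # hypothetical "deterioration" query

context, w = attention_pool(query, keys, values)
top = max(range(len(w)), key=w.__getitem__)
print(f"highest attention at hour {top}: {[round(x, 2) for x in w]}")
```

Plotting such weights across time steps is what attention visualization shows clinicians: here, hour 2, where the toy vitals are most abnormal, dominates the pooled representation.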

Finally, limitations in equity and generalizability must be highlighted. Most models are trained on data from specific healthcare systems and populations, and their performance frequently degrades when they are used in resource-limited settings or with new demographic groups. More diverse datasets, including data from low-resource and global health contexts, are required to prevent bias and ensure that AI-enabled IoMT systems benefit all patient populations.

5. CONCLUSION

Between 2020 and 2025, the integration of AI-driven CDSS with healthcare IoT devices changed the field of emergency medicine. Wearable monitoring tools have evolved into intelligent on-body platforms that transmit physiological data in real time and identify early warning signs. Similarly, mobile devices, cloud infrastructure, and conversational AI interfaces have turned telemedicine into a strong diagnostic channel that delivers specialized care to underserved and disaster-affected areas. Concurrently, the rapid development of PoC diagnostics, enabled by deep learning algorithms, biosensors, and mobile integration, has made decentralized detection of critical conditions possible with previously unattainable speed and accuracy.

The evolution of deep learning models has been a key factor in these developments. Transformer-based architectures, hybrid ensembles, and explainable AI frameworks have enabled highly accurate and interpretable predictions with fewer inputs and longer prediction windows. The broad use of real-time, cloud-enabled, and edge-deployed CDSS platforms, combined with privacy-preserving techniques such as federated learning, demonstrates the increasing accessibility, scalability, and personalization of emergency care.

Looking ahead, the ongoing convergence of wearable biosensors, telemedicine platforms, and advanced AI algorithms may enable a more integrated, proactive, and equitable healthcare ecosystem.

Acknowledgments

This research was supported by a grant from the Korean Health Technology R&D Project through the Korea Health Industry Development Institute (KHIDI), funded by the Ministry of Health & Welfare, Republic of Korea (grant number: RS-2023-00302149), and by the Technology Innovation Program (or Industrial Strategic Technology Development Program) (20017903, Development of medical combination device for active precise delivery of embolic beads for transcatheter arterial chemoembolization and simulator for embolization training to cure liver tumors), funded by the Ministry of Trade, Industry & Energy (MOTIE, Korea). This work was also supported by the Institute of Information & Communications Technology Planning & Evaluation (IITP) under the Artificial Intelligence Convergence Innovation Human Resources Development (IITP-2023-RS-2023-00256629) grant funded by the Korean Government (MSIT).

References

-

M. Joshi, F.M. Iqbal, M. Sharabiani, H. Ashrafian, S. Arora, K. McAndrew, et al., Performance of Continuous Digital Monitoring of Vital Signs with a Wearable Sensor in Acute Hospital Settings, Sensors 25 (2025) 2644.

[https://doi.org/10.3390/s25092644]

-

S.C. Garbern, G. Mbanjumucyo, C. Umuhoza, V.K. Sharma, J. Mackey, O. Tang, et al., Validation of a wearable biosensor device for vital sign monitoring in septic emergency department patients in Rwanda, Digit. Health 5 (2019) 1–11.

[https://doi.org/10.1177/2055207619879349]

- S.C. Sethuraman, P. Kompally, S.P. Mohanty, U. Choppali, MyWear: A Smart Wear for Continuous Body Vital Monitoring and Emergency Alert, arXiv., arXiv:2010.08866, (2020).

-

K. Dheman, M. Giordano, C. Thomas, P. Schilk, M. Magno, i-CardiAx: Wearable IoT-Driven System for Early Sepsis Detection Through Long-Term Vital Sign Monitoring, arXiv., arXiv:2407.21433, (2024).

[https://doi.org/10.1109/IoTDI61053.2024.00013]

-

M. Giordano, K. Dheman, M. Magno, SepAl: Sepsis Alerts on Low-Power Wearables with Digital Biomarkers and On-Device Tiny Machine Learning, IEEE Sens. J. 25 (2025) 7858–7866.

[https://doi.org/10.1109/JSEN.2024.3424655]

-

S.D. Mamdiwar, A. R, Z. Shakruwala, U. Chadha, K. Srinivasan, C.-Y. Chang, Recent Advances on IoT-Assisted Wearable Sensor Systems for Healthcare Monitoring, Biosensors 11 (2021) 372.

[https://doi.org/10.3390/bios11100372]

-

A. Andrade, A.T. Cabral, R.P. Mendes, E.G. Pereira, V.H. Delbem, M.P. Santos, IoT-based vital sign monitoring: A literature review, Smart Health 32 (2024) 100462.

[https://doi.org/10.1016/j.smhl.2024.100462]

-

S. Arun, E.R. Sykes, S. Tanbeer, RemoteHealthConnect: Innovating patient monitoring with wearable technology and custom visualization, Digit. Health 10 (2024) 1–20.

[https://doi.org/10.1177/20552076241300748]

-

A. Haleem, M. Javaid, R.P. Singh, R. Suman, Telemedicine for healthcare: Capabilities, features, barriers, and applications, Sens. Int. 2 (2021) 100117.

[https://doi.org/10.1016/j.sintl.2021.100117]

-

D. Adedinsewo, A.C. Morales-Lara, H. Hardway, P. Johnson, K.A. Young, W.T. Garzon-Siatoya, et al., Artificial intelligence-based screening for cardiomyopathy in an obstetric population: A pilot study, Cardiovasc. Digit. Health J. 5 (2024) 132–140.

[https://doi.org/10.1016/j.cvdhj.2024.03.005]

-

P. Bachtiger, C.F. Petri, F.E. Scott, S.R. Park, M.A. Kelshiker, H.K. Sahemey, et al., Point-of-care screening for heart failure with reduced ejection fraction using artificial intelligence during ECG-enabled stethoscope examination in London, UK: a prospective, observational, multicentre study, Lancet Digit. Health 4 (2022) e117–e125.

[https://doi.org/10.1016/S2589-7500(21)00256-9]

-

M. Zhang, M. Li, L. Guo, J. Liu, A Low-Cost AI-Empowered Stethoscope and a Lightweight Model for Detecting Cardiac and Respiratory Diseases from Lung and Heart Auscultation Sounds, Sensors 23 (2023) 2591.

[https://doi.org/10.3390/s23052591]

-

I. Mendez, M.C. Van Den Hof, Mobile remote-presence devices for point-of-care health care delivery, Can. Med. Assoc. J. 185 (2013) 1512–1516.

[https://doi.org/10.1503/cmaj.120223]

-

M. Berlet, T. Vogel, M. Gharba, J. Eichinger, E. Schulz, H. Friess, et al., Emergency Telemedicine Mobile Ultrasounds Using a 5G-Enabled Application: Development and Usability Study, JMIR Form. Res. 6 (2022) e36824.

[https://doi.org/10.2196/36824]

-

J.W. Dexheimer, E.M. Borycki, Use of mobile devices in the emergency department: A scoping review, Health Informatics J. 21 (2015) 306–315.

[https://doi.org/10.1177/1460458214530137]

-

S. Sikander, P. Biswas, P. Kulkarni, Recent advancements in telemedicine: Surgical, diagnostic and consultation devices, Biomed. Eng. Adv. 6 (2023) 100096.

[https://doi.org/10.1016/j.bea.2023.100096]

-

S.S. Mohsin, O.H. Salman, A.A. Jasim, M.A. Al-Nouman, A.R. Kairaldeen, A systematic review on the roles of remote diagnosis in telemedicine system: Coherent taxonomy, insights, recommendations, and open research directions for intelligent healthcare solutions, Artif. Intell. Med. 160 (2025) 103057.

[https://doi.org/10.1016/j.artmed.2024.103057]

-

S. Lee, B. Kim, S. Yoo, C. Lee, H. Lee, S. Kim, et al., Rapid deep learning-assisted predictive diagnostics for point-of-care testing, Nat. Commun. 15 (2024) 1695.

[https://doi.org/10.1038/s41467-024-46069-2]

-

K. Lakshmanan, B.M. Liu, Impact of Point-of-Care Testing on Diagnosis, Treatment, and Surveillance of Vaccine-Preventable Viral Infections, Diagnostics 15 (2025) 123.

[https://doi.org/10.3390/diagnostics15020123]

-

G.R. Han, S.Y. Park, J.H. Kim, M.C. Lee, D.W. Choi, H.S. Jung, et al., Machine learning in point-of-care testing: innovations, challenges, and opportunities, Nat. Commun. 16 (2025) 1234.

[https://doi.org/10.1038/s41467-025-58527-6]

-

M. Shang, J. Guo, J. Guo, Point-of-care testing of infectious diseases: recent advances, Sens. Diagn. 2 (2023) 1201-1218.

[https://doi.org/10.1039/D3SD00092C]

-

A. Parihar, P. Ranjan, S.K. Sanghi, A.K. Srivastava, R. Khan, Point-of-Care Biosensor-Based Diagnosis of COVID-19 Holds Promise to Combat Current and Future Pandemics, ACS Appl. Bio Mater. 3 (2020) 7326–7343.

[https://doi.org/10.1021/acsabm.0c01083]

-

G.-R. Han, A. Goncharov, M. Eryilmaz, S. Ye, H.-A. Joung, R. Ghosh, et al., Deep learning-enhanced chemiluminescence vertical flow assay for high-sensitivity cardiac troponin I testing, Small 21 (2025) 2411585.

[https://doi.org/10.1002/smll.202411585]

- A. Goncharov, G.-R. Han, M. Eryilmaz, S. Ye, H.-A. Joung, R. Ghosh, et al., Deep learning-enabled multiplexed point-of-care sensor using a paper-based fluorescence vertical flow assay, ACS Nano 18 (2024) 27449–27464.

-

S. Jiang, Y. Wang, X. Liu, Z. Chen, L. Zhang, Q. Wu, et al., A Point-of-Care Testing Device Utilizing Graphene-Enhanced Fiber Optic SPR Sensor for Real-Time Detection of Infectious Pathogens, Biosensors 13 (2023) 1029.

[https://doi.org/10.3390/bios13121029]

-

S. Jahandideh, G. Ozavci, B.W. Sahle, A.Z. Kouzani, F. Magrabi, T. Bucknall, Evaluation of machine learning-based models for prediction of clinical deterioration: A systematic literature review, Int. J. Med. Inform. 175 (2023) 105084.

[https://doi.org/10.1016/j.ijmedinf.2023.105084]

-

A. Choudhury, O. Asan, Role of Artificial Intelligence in Patient Safety Outcomes: Systematic Literature Review, JMIR Med. Inform. 8 (2020) e18599.

[https://doi.org/10.2196/18599]

-

M.A. Morid, O.R.L. Sheng, J. Dunbar, Time Series Prediction Using Deep Learning Methods in Healthcare, ACM Trans. Manag. Inf. Syst. 14 (2023) 1–29.

[https://doi.org/10.1145/3531326]

-

J.Q. Sheng, P.J.H. Hu, X. Liu, T.S. Huang, Y.H. Chen, Predictive Analytics for Care and Management of Patients with Acute Diseases: Deep Learning-Based Method to Predict Crucial Complication Phenotypes, J. Med. Internet Res. 23 (2021) e18372.

[https://doi.org/10.2196/18372]

-

A. Amirahmadi, M. Ohlsson, K. Etminani, Deep learning prediction models based on EHR trajectories: A systematic review, J. Biomed. Inform. 144 (2023) 104430.

[https://doi.org/10.1016/j.jbi.2023.104430]

-

A. Choi, K. Lee, H. Hyun, K.J. Kim, B. Ahn, K.H. Lee, et al., A novel deep learning algorithm for real-time prediction of clinical deterioration in the emergency department for a multimodal clinical decision support system, Sci. Rep. 14 (2024) 30116.

[https://doi.org/10.1038/s41598-024-80268-7]

-

Y. Jeon, Y.S. Kim, W. Jang, J.D. Park, B. Lee, Development of a deep learning model that predicts critical events of pediatric patients admitted to general wards, Sci. Rep. 14 (2024) 3870.

[https://doi.org/10.1038/s41598-024-55528-1]

-

A. Al Kuwaiti, K. Nazer, A. Al-Reedy, S. Al-Shehri, A Review of the Role of Artificial Intelligence in Healthcare, J. Pers. Med. 13 (2023) 951.

[https://doi.org/10.3390/jpm13060951]

-

A. Singh, Predictive Analytics and Early Disease Detection: Using Deep Learning Models to Predict the Onset and Progression of Diseases Based on Historical and Real-Time Data, SSRN Electron. J. (2024) 1–15.

[https://doi.org/10.2139/ssrn.5196927]

-

D. Dixon, R. Sharma, A. Patel, M. Johnson, K. Williams, Unveiling the Influence of AI Predictive Analytics on Patient Outcomes: A Comprehensive Narrative Review, Cureus 16 (2024) e59954.

[https://doi.org/10.7759/cureus.59954]

-

S. Zhang, S. Ding, Z. Xu, J. Ye, Machine Learning-Based Mortality Prediction in Critically Ill Patients with Hypertension: Comparative Analysis, Fairness, and Interpretability, medRxiv., (2025).

[https://doi.org/10.1101/2025.04.05.25325307]

-

A. Garcés-Jiménez, M.L. Polo-Luque, J.A. Gómez-Pulido, D. Rodríguez-Puyol, J.M. Gómez-Pulido, Predictive health monitoring: Leveraging artificial intelligence for early detection of infectious diseases in nursing home residents through discontinuous vital signs analysis, Comput. Biol. Med. 174 (2024) 108469.

[https://doi.org/10.1016/j.compbiomed.2024.108469]

-

A.P. Zhao, L. Chen, M. Wang, Y. Liu, X. Zhang, H. Li, AI for science: Predicting infectious diseases, J. Saf. Sci. Resil. 5 (2024) 130–146.

[https://doi.org/10.1016/j.jnlssr.2024.02.002]

-

Y. Wang, L. Liu, C. Wang, Trends in using deep learning algorithms in biomedical prediction systems, Front. Neurosci. 17 (2023) 1256351.

[https://doi.org/10.3389/fnins.2023.1256351]

-

Y. Liu, B. Wang, Advanced applications in chronic disease monitoring using IoT mobile sensing device data, machine learning algorithms and frame theory: a systematic review, Front. Public Health 13 (2025) 1510456.

[https://doi.org/10.3389/fpubh.2025.1510456]

-

M. Elhaddad, S. Hamam, AI-Driven Clinical Decision Support Systems: An Ongoing Pursuit of Potential, Cureus 16 (2024) e57728.

[https://doi.org/10.7759/cureus.57728]

-

Z. Chen, N. Liang, H. Zhang, H. Li, Y. Yang, X. Zong, et al., Harnessing the power of clinical decision support systems: challenges and opportunities, Open Heart 10 (2023) e002432.

[https://doi.org/10.1136/openhrt-2023-002432]

-

L. Wang, X. Chen, L. Zhang, L. Li, Y. Huang, Y. Sun, et al., Artificial intelligence in clinical decision support systems for oncology, Int. J. Med. Sci. 20 (2023) 79-86.

[https://doi.org/10.7150/ijms.77205]

-

I. Ficili, M. Giacobbe, G. Tricomi, A. Puliafito, From Sensors to Data Intelligence: Leveraging IoT, Cloud, and Edge Computing with AI, Sensors 25 (2025) 1763.

[https://doi.org/10.3390/s25061763]

-

S.A. Alsuhibany, S. Abdel-Khalek, A. Algarni, A. Fayomi, D. Gupta, et al., Ensemble of Deep Learning Based Clinical Decision Support System for Chronic Kidney Disease Diagnosis in Medical Internet of Things Environment, Comput. Intell. Neurosci. (2021) 4931450.

[https://doi.org/10.1155/2021/4931450]

-

A.C.S. Robert Vincent, S. Sengan, Effective clinical decision support implementation using a multi filter and wrapper optimisation model for Internet of Things based healthcare data, Sci. Rep. 14 (2024) 20698.

[https://doi.org/10.1038/s41598-024-71726-3]

-

A. Choi, K. Lee, H.J. Kim, S.Y. Choi, J.H. Kim, H.S. Lee, Development of a machine learning-based clinical decision support system to predict clinical deterioration in patients visiting the emergency department, Sci. Rep. 13 (2023) 8589.

[https://doi.org/10.1038/s41598-023-35617-3]

-

R. Vincent, A.C.S. Robert, S. Sengan, K. Shankar, IoT-Cloud-Based Smart Healthcare Monitoring System for Heart Disease Prediction via Deep Learning, Electronics 11 (2022) 2292.

[https://doi.org/10.3390/electronics11152292]

- A.A. Rahman, P. Agarwal, R. Noumeir, P. Jouvet, V. Michalski, S.E. Kahou, Empowering Clinicians with Medical Decision Transformers: A Framework for Sepsis Treatment, arXiv., arXiv:2407.19380, (2024).

-

H.N. Cho, D.M. Keselman, M.G. Rosen-Zvi, S.S. Ong, M.B. McCoy, Task-Specific Transformer-Based Language Models in Health Care: Scoping Review, JMIR Med. Inform. 12 (2024) e49724.

[https://doi.org/10.2196/49724]

-

D. Oniani, X. Wu, S. Visweswaran, S. Kapoor, S. Kooragayalu, K. Polanska, et al., Enhancing Large Language Models for Clinical Decision Support by Incorporating Clinical Practice Guidelines, arXiv., arXiv:2401.11120, (2024).

[https://doi.org/10.1109/ICHI61247.2024.00111]

-

F. Gaber, M. Shaik, A. Akalin, Evaluating large language model workflows in clinical decision support for triage and referral and diagnosis, NPJ Digit. Med. 8 (2025) 263.

[https://doi.org/10.1038/s41746-025-01684-1]

-

T.H. Lin, H.Y. Chung, M.J. Jian, C.K. Chang, C.L. Perng, G.S. Liao, et al., An Advanced Machine Learning Model for a Web-Based Artificial Intelligence-Based Clinical Decision Support System Application: Model Development and Validation Study, J. Med. Internet Res. 26 (2024) e56022.

[https://doi.org/10.2196/56022]

-

A. Rahman, M.S. Hossain, G. Muhammad, D. Kundu, T. Debnath, M. Rahman, et al., Federated learning-based AI approaches in smart healthcare: concepts, taxonomies, challenges and open issues, Cluster Comput. 26 (2023) 2271–2311.

[https://doi.org/10.1007/s10586-022-03658-4]

-

C.M. Thwal, K. Thar, Y.L. Tun, C.S. Hong, Attention on personalized clinical decision support system: Federated learning approach, Proceedings of the 2021 IEEE International Conference on Big Data and Smart Computing (BigComp), Jeju Island, Korea (South), pp. 141–147.

[https://doi.org/10.1109/BigComp51126.2021.00035]

- M. Alkan, I. Zakariyya, S. Leighton, K.B. Sivangi, C. Anagnostopoulos, F. Deligianni, Artificial Intelligence-Driven Clinical Decision Support Systems, arXiv., arXiv:2501.09628, (2025).

-

A.C.S. Robert Vincent, S. Sengan, Edge computing-based ensemble learning model for health care decision systems, Sci. Rep. 14 (2024) 26204.

[https://doi.org/10.1038/s41598-024-78225-5]

-

M.K.K. Perumal, R.R. Renuka, S.K. Subbiah, P.M. Natarajan, Artificial intelligence-driven clinical decision support systems for early detection and precision therapy in oral cancer: a mini review, Front. Oral Health 6 (2025) 1592428.

[https://doi.org/10.3389/froh.2025.1592428]

-

R.T. Sutton, D. Pincock, D.C. Baumgart, D.C. Sadowski, R.N. Fedorak, K.I. Kroeker, An overview of clinical decision support systems: benefits, risks, and strategies for success, NPJ Digit. Med. 3 (2020) 17.

[https://doi.org/10.1038/s41746-020-0221-y]

-

A. Choi, K. Lee, H. Hyun, K.J. Kim, B. Ahn, K.H. Lee, et al., A novel deep learning algorithm for real-time prediction of clinical deterioration in the emergency department for a multimodal clinical decision support system, Sci. Rep. 14 (2024) 30116.

[https://doi.org/10.1038/s41598-024-80268-7]

-

I.I. Al Barazanchi, H.M. Al-Hashimi, A.K. Jassim, M.A. Mohammed, Optimizing the Clinical Decision Support System (CDSS) by Using Recurrent Neural Network (RNN) Language Models for Real-Time Medical Query Processing, Comput. Mater. Contin. 81 (2024) 4787–4832.

[https://doi.org/10.32604/cmc.2024.055079]

-

C.Y. Magnin, D. Lauer, M. Ammeter, J. Gote-Schniering, From images to clinical insights: an educational review on radiomics in lung diseases, Breathe 21 (2025) 230225.

[https://doi.org/10.1183/20734735.0225-2023]

-

X.L. Fang, Z.H. Wu, Q.Y. Chen, L.Q. Tang, S.S. Guo, H.Y. Mo, et al., A radiogenomic clinical decision support system to inform individualized treatment in advanced nasopharyngeal carcinoma, iScience 27 (2024) 110431.

[https://doi.org/10.1016/j.isci.2024.110431]

-

C. McGenity, T. Slide, M. Raza, A. Moore, S. Boyle, Artificial intelligence in digital pathology: a systematic review and meta-analysis of diagnostic test accuracy, NPJ Digit. Med. 7 (2024) 137.

[https://doi.org/10.1038/s41746-024-01106-8]

-

V. Brodsky, A. Williamson, L. Pantanowitz, Generative Artificial Intelligence in Anatomic Pathology, Arch. Pathol. Lab. Med. 149 (2025) 298–318.

[https://doi.org/10.5858/arpa.2024-0215-RA]

-

J.K. Sandhu, C. Sharma, A. Kaur, S.K. Pandey, A. Sinha, J. Shreyas, Development of a clinical decision support system for breast cancer detection using ensemble deep learning, Sci. Rep. 15 (2025) 1468.

[https://doi.org/10.1038/s41598-025-06784-2]

-

A.A. Bayor, J. Li, I.A. Yang, M. Varnfield, Designing Clinical Decision Support Systems (CDSS): A User-Centered Lens of the Design Characteristics, Challenges, and Implications: Systematic Review, JMIR Med. Inform. 12 (2024) e63733.

[https://doi.org/10.2196/preprints.63733]

-

A. Katzmann, O. Taubmann, S. Ahmad, A. Mühlberg, M. Sühling, H.M. Groß, Explaining clinical decision support systems in medical imaging using cycle-consistent activation maximization, Neurocomputing 458 (2021) 141–156.

[https://doi.org/10.1016/j.neucom.2021.05.081]

-

A. Rahman, M.S. Hossain, G. Muhammad, D. Kundu, T. Debnath, M. Rahman, et al., Federated learning-based AI approaches in smart healthcare: concepts, taxonomies, challenges and open issues, Cluster Comput. 26 (2023) 2271–2311.

[https://doi.org/10.1007/s10586-022-03658-4]

-

Z. Chen, N. Liang, H. Zhang, H. Li, Y. Yang, X. Zong, et al., Harnessing the power of clinical decision support systems: challenges and opportunities, Open Heart 10 (2023) e002432.

[https://doi.org/10.1136/openhrt-2023-002432]

Muhammad Saad Bin Tarique is currently pursuing his PhD in the Department of Artificial Intelligence Convergence at Chonnam National University, South Korea. He received his B.S. degree in Mechatronics Engineering from Universiti Teknologi Malaysia in 2021. His research focuses on dielectric elastomer actuators, soft robotics and sensors, biologically inspired robotic systems, soft mechanisms, and AI-integrated actuation technologies.

Usman Ali is a PhD student in the Department of AI Convergence at Chonnam National University, South Korea. He received his B.S. degree in Telecommunication from Hazara University, Pakistan, in 2021, and his M.S. degree in Intelligent Electronics and Computer Engineering from Chonnam National University in 2024. His research interests include machine learning, deep learning (computer vision), semi-supervised learning, and real-time AI systems.

Doyeon Bang is a professor at the Graduate School of Data Science and the Department of AI Convergence at Chonnam National University, South Korea. He received his B.S. degree in Chemical Engineering and Physics from Yonsei University in 2007 and his Ph.D. in Nano Medical Science (Interdisciplinary Program) from Yonsei University in 2012. Prior to joining Chonnam National University, he conducted postdoctoral research in Bioengineering at the University of California, Berkeley (BioPOETs Lab) and served as a senior researcher at the Korea Institute of Medical Micro Robotics (KIMIRo). His research focuses on AI-driven micro-robotics, 4D printing, and advanced biomedical devices, with an emphasis on minimally invasive diagnostics and therapeutics, smart functional materials, and intelligent actuation systems for next-generation healthcare applications.